BigWorld Technology 2.1. Released 2012.

Copyright © 1999-2012 BigWorld Pty Ltd. All rights reserved.

This document is proprietary commercial in confidence and access is restricted to authorised users. This document is protected by copyright laws of Australia, other countries and international treaties. Unauthorised use, reproduction or distribution of this document, or any portion of this document, may result in the imposition of civil and criminal penalties as provided by law.

Table of Contents

- 1. Overview

- 2. User Input

- 3. Cameras

- 4. Terrain

- 5. Cloud shadows

- 6. Chunks

- 7. Entities

- 8. User Data Objects

- 9. Scripting

- 10. Models

- 11. Animation System

- 12. Integrating With BigWorld Server

- 13. Server Communications

- 14. Particles

- 15. Detail Objects

- 16. Water

- 17. Graphical User Interface (GUI)

- 18. Fonts

- 19. Input Method Editors (IME)

- 20. BigWorld Web Integration

- 21. Sounds

- 22. 3D Engine (Moo)

- 23. Post Processing

- 24. Job System

- 25. Debugging

- 26. Releasing The Game

- 27. Shared Development Environments


This document is a technical design overview of the Client Engine for BigWorld Technology. It is part of a larger set of documentation describing the whole system. It refers to the BigWorld Server only to provide context. Readers interested only in the workings of the BigWorld Client may ignore the server information.

The intended audience is technical, typically MMOG developers and designers.

For API-level information, please refer to the online documentation.

Note

For details on BigWorld terminology, see the document Glossary of Terms.

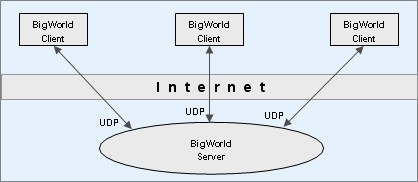

The BigWorld Client provides the end-user experience of the BigWorld client/server architecture. In a BigWorld client/server implementation, the client connects to the server using UDP/IP over the Internet.

The BigWorld Client presents the user with a realistic and immersive 3D environment. The contents of that environment are a combination of static data stored with the client, and dynamic data sent from the server. The user interacts with the world through an avatar (character) that he or she controls. The movement and actions of that avatar are relayed to the server. The avatars controlled by other connected users are part of the dynamic data sent from the server.

Client perspective of BigWorld system. Note that the BigWorld server is not just one machine, although the client can treat it as such.

Developers may choose to integrate the client with their own server technology, but if they do, they will have to address problems already tackled by the BigWorld architecture, like:

- Uniform collision scene (used on client and server).

- Uniform client/server scripting (used on client and server).

- Tools that produce server and client content.

- Optimised low bandwidth communication protocol.

The client initialises itself, connects to the server, and then runs in its main thread a standard game loop (each iteration of which is known as a frame):

1. Receive input

2. Update world

3. Draw world

Each step of the frame is described below:

Input

Input is received from attached input devices using DirectInput. In a BigWorld client/server implementation, input is also received over the network from the server using WinSock 2.

Update

The world is updated to account for the time that has passed since the last update.

Draw

The world is drawn with the 3D engine Moo, which uses Direct3D (version 9c). For details, see 3D Engine (Moo).

A number of other objects also fall into the world's 'update then draw' system. These include a dozen related to the weather and atmospheric effects (rain, stars, fog, clouds, etc.), various script assistance systems (targeting, combat), pools of water, footprints, and shadows.

There are other threads for background scene loading and other asynchronous tasks.

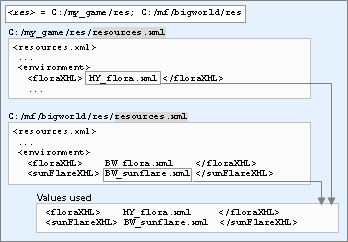

The BigWorld client is a generic executable that is fully configurable via the game specific resources. The location of these resources must be supplied to the client so that it can initialise correctly.

Typically, at least two resource paths need to be specified - your project specific resource path and the BigWorld resource path (which supplies common resources such as standard shaders, fonts, scripts, etc). For example, if your game was located in "my_game", the two resource paths you would define are:

my_game/res

bigworld/res

When the engine tries to access a resource, it looks in each resource tree in the order in which the trees were given to the engine. For example, if the client scripts request the resource named sets/models/foo.model, the engine tries the following locations:

my_game/res/sets/models/foo.model

bigworld/res/sets/models/foo.model

The search stops at the first valid file found. It is therefore possible to override a resource specified in bigworld/res by placing a replacement file at the same location within my_game/res.
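The lookup order described above can be sketched in a few lines of Python. This is only an illustration of the search rule, not the engine's implementation; the function name resolve_resource is hypothetical.

```python
import os


def resolve_resource(name, res_paths):
    """Return the first existing file for `name`, trying each root in order.

    `res_paths` is the ordered list of resource trees, for example
    ["my_game/res", "bigworld/res"]. Returns None if no tree has the file.
    """
    for root in res_paths:
        candidate = os.path.join(root, name)
        if os.path.isfile(candidate):
            return candidate  # the search stops at the first valid file
    return None
```

Because the game tree is listed first, a file placed under my_game/res shadows the file of the same relative name under bigworld/res.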

There are two ways to specify search paths: the paths.xml file and the --res command line switch.

paths.xml is an XML file, containing a list of resource paths, that the engine looks for on startup. The client first tries to open paths.xml in the current working directory. If the file cannot be found there, the client tries to open paths.xml in the same folder as the client executable. The schema of paths.xml looks like this:

<root>

<Paths>

<Path>../../my_game/res</Path>

<Path>../../bigworld/res</Path>

</Paths>

</root>

Note

Paths are defined relative to the location of paths.xml.
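As a rough illustration of the schema and of the relative-path rule in the note above, the following Python sketch reads a paths.xml file with the standard library and resolves each entry against the folder that contains the file. The function name load_res_paths is hypothetical, and the sketch does not reproduce the engine's own parser.

```python
import os
import xml.etree.ElementTree as ET


def load_res_paths(paths_xml):
    """Return the resource paths listed in `paths_xml`, in file order.

    Each <Path> entry is resolved relative to the location of paths.xml.
    """
    base = os.path.dirname(os.path.abspath(paths_xml))
    root = ET.parse(paths_xml).getroot()
    return [
        os.path.normpath(os.path.join(base, path.text.strip()))
        for path in root.findall("./Paths/Path")
        if path.text
    ]
```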

By default the client looks for the paths.xml file illustrated above. However, this can be overridden on the command line using the --res switch. Multiple paths are separated by semicolons. For example:

"bwclient.exe" --res ../../../my_game/res;../../../bigworld/res

Note

These paths must be defined relative to the executable location, not the current working directory.

Configuration files are defined relative to one of the entries in the resources folders list (or <res>). For details on how BigWorld compiles this list, see Resource search paths.

The resources.xml file defines the game-specific resources that the client engine needs in order to run.

The entries in resources.xml are read from the various entries in the resources folders list (or <res>), in the order in which they are listed. Only entries that are still missing have their values read from subsequent folders.

A default file exists under the folder bigworld/res, and any of its resources may be overridden by creating your own file <res>/resources.xml.

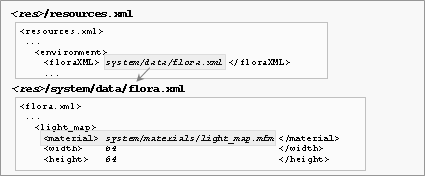

The example below illustrates this mechanism:

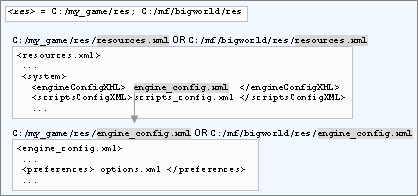

Precedence of your game's resources.xml file over BigWorld's

For a complete list of the resources and values used by BigWorld, refer to the resources.xml file provided with the distribution.
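The precedence rule for resources.xml can be sketched as a first-wins merge. The sketch below is an assumption-laden illustration: it only considers top-level tags, the function name merge_resources is hypothetical, and the file names in the usage example are invented for the example.

```python
import xml.etree.ElementTree as ET


def merge_resources(xml_documents):
    """Merge resources.xml contents given in resource-path order.

    The first document that defines a tag wins; later documents only
    supply tags that are still missing.
    """
    merged = {}
    for text in xml_documents:
        for child in ET.fromstring(text):
            merged.setdefault(child.tag, (child.text or "").strip())
    return merged


# Hypothetical contents of my_game/res/resources.xml and bigworld/res/resources.xml.
game_xml = "<root><engineConfigXML>my_engine_config.xml</engineConfigXML></root>"
bigworld_xml = (
    "<root>"
    "<engineConfigXML>engine_config.xml</engineConfigXML>"
    "<scriptsConfigXML>scripts_config.xml</scriptsConfigXML>"
    "</root>"
)
resources = merge_resources([game_xml, bigworld_xml])
```

Here the game's engineConfigXML value takes precedence, while scriptsConfigXML falls back to the default supplied by the second document.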

The XML file <engine_config>.xml lists several engine configuration options.

The actual name and location of this file is defined by the resources.xml's <engineConfigXML> tag. The location of the resources.xml file is always defined relative to one of the entries in the resources directories list (or <res>), which will be searched in the order in which they are listed.

An example follows below:

Locating <engineConfigXML>'s file

Under the main section of the XML file, a personality tag must be included, naming the personality script to use.

Several other tags are used by BigWorld to customise the way the client runs. For a complete list of the supported tags and a description of their functions, refer to the engine_config.xml file provided with the distribution. Additional information can also be found in the Client Python API.

The data contained in this file is passed to the personality script as the second argument to the init method (for details, see init), in the form of a DataSection object.

The XML file <scripts_config>.xml can be used to configure the game scripts. It has no fixed grammar, and its form can be freely defined by the script programmer.

The actual name and location of this file is defined by the resources.xml's scriptsConfigXML tag. Its location is always defined relative to one of the entries in the resources folders list (or <res>), which will be searched in the order in which they are listed.

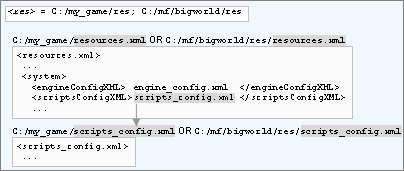

Locating scriptsConfigXML's file

The data contained in this file is passed to the personality script as the first argument to the init method (for details, see init), in the form of a DataSection object.

The XML file <preferences>.xml is used to save user preferences for video and graphics settings, with a pre-defined grammar.

The file also embeds a data section (called scriptsPreference) that can be used by scripts to persist game preferences — there is no fixed grammar for this section.

The actual name and location of this file is defined by the preferences tag in the file specified by resources.xml's engineConfigXML tag.

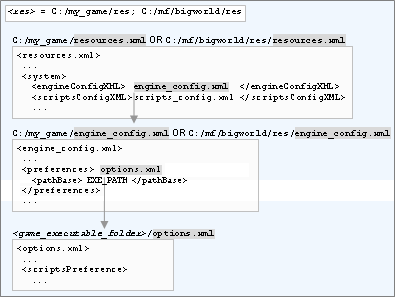

Locating engineConfigXML's preferences' file

By default the preferences XML file is relative to the client executable location, but this can be changed to one of several other base paths (e.g. the user's My Documents directory) by specifying a pathBase tag. The base path is defined as an optional sub-tag of the preferences tag. The available path bases for <preferences>.xml are:

- EXE_PATH: The preferences XML file will be stored relative to the location of the client executable. This is the default location if none is supplied.

- CWD: The preferences XML file will be stored relative to the current working directory. Note that if the working directory changes during runtime, it will save in the new working directory.

- ROAMING_APP_DATA: The preferences XML file will be stored relative to the current user's roaming AppData directory. In other words, if the user is on a domain, the data will be synchronised with the domain controller when the user logs in and out of Windows.

- LOCAL_APP_DATA: The preferences XML file will be stored relative to the current user's local AppData directory.

- APP_DATA: This is the same as ROAMING_APP_DATA.

- MY_DOCS: The preferences XML file will be stored relative to the current user's My Documents directory.

- MY_PICTURES: The preferences XML file will be stored relative to the current user's My Pictures directory.

- RES_TREE: The preferences XML file will be stored relative to the first resource path found in paths.xml.

The data contained in the scriptsPreference of this file is passed to the personality script as the third argument to the init method (for details, see init), in the form of a DataSection object.

The current user preferences can be saved back into the file (including changes to the DataSection that represents the script preferences) by calling BigWorld.savePreferences. For details, see the Client Python API .

BigWorld uses a left-handed coordinate system. The x-axis points "left", the y-axis points "up" and the z-axis points "forward".

yaw is rotation around the y-axis. Positive is to the right, negative is to the left.

pitch is rotation around the x-axis. Positive is nose pointing down, negative is nose pointing up.

roll is rotation around the z-axis. Positive is to the left, negative is to the right.


The BigWorld client uses a combination of Windows messages and the Windows raw input API for keyboard and mouse input. It reads key-up, key-down, and character press events from the keyboard as well as high resolution movement events from the mouse. It uses the DirectInput API to read button and axis movement events from joysticks.

Key events, encapsulated by the KeyEvent object (BigWorld.KeyEvent in Python), are generated by devices that have keys or buttons. This includes the keyboard, mouse buttons, and joystick buttons.

The two basic types of key events are key-down and key-up.

If a KeyEvent is generated by the keyboard, it may have a character attached to it. The character generated by a particular key is determined by the currently set locale and input language in the operating system, and is represented by the KeyEvent.character member (a Unicode string).

Dead character keys are supported (e.g. in Spanish, a user can type the letter é by first pressing the apostrophe key followed by the e key). In this case the first key press will not have a character associated with it, and the second key press will have the final character.

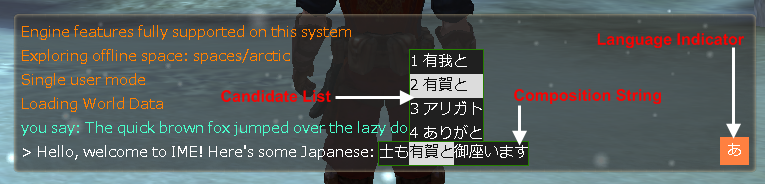

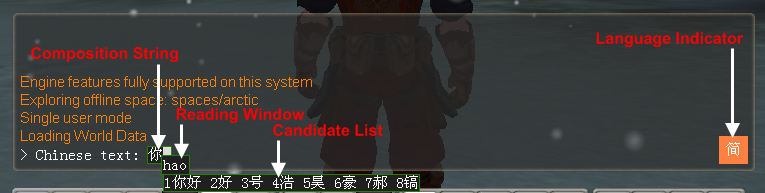

The BigWorld client supports advanced Input Method Editors (IME). See Input Method Editors (IME) for details on using an IME in your game.

The keyboard will generate auto-repeat events when keys are held down, based on the user's operating system settings (e.g. repeat delay). The mouse and joystick do not generate auto-repeat events.

Auto-repeat events are sent as additional key-down events. Scripts that do not want to handle repeat events can call the KeyEvent.isRepeatedEvent method to determine whether or not an event is an auto-repeat event.

When the user presses a button (keyboard, mouse or joystick), the sequence of events is:

1. The first key-down event is raised.

2. If the key is held down, multiple key-down events are raised due to auto-repeat (keyboard only).

3. A key-up event is raised when the user releases the button.
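The sequence above can be illustrated with a handler that tracks pressed keys and ignores auto-repeat. This is a sketch that runs outside the client: StubKeyEvent is a stand-in for BigWorld.KeyEvent, isRepeatedEvent is the method named in this chapter, and the key attribute and isKeyDown method are assumptions made for the example.

```python
class StubKeyEvent(object):
    """Stand-in for BigWorld.KeyEvent so the sketch runs without the client."""

    def __init__(self, key, down, repeated=False):
        self.key = key              # assumed attribute: the key code
        self._down = down
        self._repeated = repeated

    def isKeyDown(self):            # assumed method name
        return self._down

    def isRepeatedEvent(self):      # method named in this chapter
        return self._repeated


pressed = set()


def handleKeyEvent(event):
    """Track held keys; return True if the event was consumed."""
    if event.isRepeatedEvent():
        return False                # auto-repeat: leave it for other handlers
    if event.isKeyDown():
        pressed.add(event.key)      # first key-down
    else:
        pressed.discard(event.key)  # key-up
    return True
```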

The output from the user input module is processed by a number of other modules, which take it in turn to examine events and either consume or ignore them. If an event is not consumed by any module then it is discarded. The order of modules that get a turn at the events is as follows:

1. Debug: special keys, consoles, etc.

2. Personality script: global keys.

3. Application: hard-coded keys such as QUIT.

4. Player script: the rest, which is the major part of the processing.

Note

The GUI system does not automatically receive input. Instead, it is up to the script writer to choose when it does, either in the personality script or in the player script. The most obvious place is in the personality script callbacks. For example, in the personality script's handleKeyEvent, you should call GUI.handleKeyEvent() and check the return value.

Similarly, the active camera also does not automatically receive input events. It is up to the Python scripts to decide when and where the camera receives user input.

The BigWorld client performs event matching to ensure consistent module behaviour. If a key-down event is consumed by a module, that module's identifier is recorded as the sink of the event's key number. When the corresponding auto-repeat and key-up events arrive, they are delivered directly to that module. For example, if a chat console is brought up (and inserted into the list) while the player is running, and the user subsequently releases the run key, then the player script will still get the key-up event for that key, and be able to stop the run action.
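The event matching described above can be sketched as a small router that remembers which module consumed each key-down. This is an illustration of the idea only: the EventRouter class, its method names and the handler signature are hypothetical and do not mirror the engine's C++ code.

```python
class EventRouter(object):
    """Offer key-downs to modules in order; route repeats and key-ups to the sink."""

    def __init__(self, handlers):
        self.handlers = handlers    # ordered list of (name, callable(key, is_down) -> bool)
        self.sinks = {}             # key -> (name, callable) that consumed the key-down

    def dispatch(self, key, is_down, is_repeat=False):
        sink = self.sinks.get(key)
        if sink is not None and (is_repeat or not is_down):
            if not is_down:
                del self.sinks[key]         # key-up ends the match
            name, handler = sink
            handler(key, is_down)
            return name
        if is_down and not is_repeat:
            for name, handler in self.handlers:
                if handler(key, is_down):   # the first module to consume becomes the sink
                    self.sinks[key] = (name, handler)
                    return name
        return None                         # not consumed: the event is discarded
```

With this scheme, inserting a new module at the front of the list after a key has been pressed does not steal that key's key-up event from the module that consumed the key-down.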

In some cases it may be desirable to temporarily block certain key events from being passed to the scripts. For example, the GUI scripts may handle a key-down event by removing the current GUI screen and replacing it with a new GUI screen. By default, since the new GUI screen has become the active screen by the time handleKeyEvent returns, any associated auto-repeat and key-up events will be posted to the new GUI screen, possibly creating unwanted behaviour.

The BigWorld.sinkKeyEvents function can be used to stop all key events for the given key-code from reaching the scripts until (and including) the next key-up. See the Python API guide for details.

It is often necessary to know where the mouse was when a key event occurred (rather than where the mouse is at the time of handling the event), especially when processing mouse button events. The mouse cursor position is therefore available via the KeyEvent.cursorPosition property, which should be used instead of GUI.mcursor().position wherever possible.

High resolution mouse movement events are sent to the scripts as a MouseEvent. This object exposes three direction deltas.

- The dx and dy members are signed integers indicating movement of the mouse in the X and Y directions.

- The dz member represents movement of the mouse wheel.

Note

If multiple mouse deltas arrive from the driver within a single frame, they are accumulated into a single MouseEvent.
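The per-frame accumulation mentioned in the note can be pictured as follows. This is a minimal sketch under the assumption that deltas are simply summed; the MouseAccumulator class is hypothetical and only mirrors the dx, dy and dz members described above.

```python
class MouseAccumulator(object):
    """Sum the raw deltas that arrive during one frame into a single event."""

    def __init__(self):
        self.dx = self.dy = self.dz = 0
        self.dirty = False

    def add(self, dx, dy, dz=0):
        """Record one delta reported by the driver."""
        self.dx += dx
        self.dy += dy
        self.dz += dz
        self.dirty = True

    def flush(self):
        """Return (dx, dy, dz) for this frame, or None if the mouse did not move."""
        if not self.dirty:
            return None
        event = (self.dx, self.dy, self.dz)
        self.dx = self.dy = self.dz = 0
        self.dirty = False
        return event
```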

As with the KeyEvent object, the mouse cursor position at the time the event occurred is available as a member of the MouseEvent object.

Mouse buttons are sent as a KeyEvent, but they do not generate auto-repeat events. See Key events for details.

The BigWorld client will automatically detect the first joystick device attached to the system, and has been designed to be used with dual-stick style joypads.

When axis events occur, they will be sent to the scripts as an AxisEvent object via the handleAxisEvent personality script function.

Joystick buttons are sent as a KeyEvent, but they do not generate auto-repeat events. See Key events for details.

The C++ engine will automatically pass axis events to the active cursor, so the direction cursor can be joystick controlled by setting it as the active cursor using BigWorld.setCursor. The direction cursor will process any events generated by the right axis.

The C++ engine will also give the physics subsystem a chance to handle axis events. The physics treats axis input as a special case and will scale movement speed by how far the user has pushed the joystick forward.

To enable joystick support on movement physics, set the Physics.joystickEnabled property to True and be sure to set the joystickFwdSpeed and joystickBackSpeed properties to values appropriate for your game.


The placement of the camera update within the general update process is a delicate matter, because the camera depends on some components having been updated before it, whilst other components depend on the camera being updated before them.

Conceptually, there are four types of camera: fixed camera, FlexiCam, cursor camera, and free camera. The first three are client-controlled views, ranging from minimum user interaction to maximum user interaction. The last camera is completely user-controlled, but is not part of actual game play.

There are, however, just three camera classes: FlexiCam, CursorCamera, and FreeCamera. The fixed camera is implemented with a FlexiCam object. They all derive from a common BaseCamera class.

There is only ever one active camera at a time. The personality script usually handles camera management, since the camera is a global, but any script can also manipulate the camera, and the player script often does (although usually indirectly, through the personality script).

The base class and all the derived classes are fully accessible to Python. Any camera can be created and set to whatever position a script desires, including to the position of another camera. This is particularly useful when switching camera types to remove any unwanted 'jump-cuts'.

The cursor camera is a camera that follows the character in the game world. It always positions itself on a sphere centred on the character's head. It works primarily with the direction cursor so as to face the camera in the direction of the character's head. You may use any MatrixProvider in place of the direction cursor, and you may use any MatrixProvider in place of the player's head.

The direction cursor is an input handler that translates device input from the user into the manipulation of an imaginary cursor that travels on an invisible sphere. This cursor is described by its pitch and yaw. It produces a pointing vector extending from the head position of the player's avatar in world space, in the direction of the cursor's pitch and yaw. The direction cursor is a useful tool to allow a targeting method across different devices. Rather than have each device affect the camera and character mesh, each device talks to the direction cursor, affecting its look-at vector in the world. The cursor camera, target tracker, and action matcher then read the direction cursor for information on what needs to be done.

The cursor camera takes the position of the direction cursor on the sphere, extends the line back towards the character's head, and follows that line until it intersects with the sphere on the other side. This intersection point is the cursor camera's position. The direction of the camera is always that of the direction cursor.
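The geometry in the paragraph above reduces to stepping from the head position in the direction opposite to the cursor's pointing vector, by the radius of the sphere. The sketch below assumes plain (x, y, z) tuples and a hypothetical function name; it illustrates the calculation rather than the engine's CursorCamera code.

```python
import math


def cursor_camera_position(head, direction, radius):
    """Return the point on the sphere opposite the direction cursor.

    `head` is the sphere centre, `direction` is the direction cursor's
    pointing vector (any non-zero length), and `radius` is the sphere radius.
    """
    length = math.sqrt(sum(c * c for c in direction))
    if length == 0.0:
        raise ValueError("direction must be a non-zero vector")
    unit = [c / length for c in direction]
    # Follow the line back through the head to the far side of the sphere.
    return tuple(h - radius * u for h, u in zip(head, unit))
```

For a head at (0, 1.8, 0), a cursor pointing along +z and a radius of 3, the camera sits at (0, 1.8, -3) and looks along +z.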

The direction cursor is an instance of the abstract concept InputCursor. There can be only one active InputCursor at any time, and BigWorld automatically forwards keyboard, joystick, and mouse events to it. Upon startup, the direction cursor is the active InputCursor by default. You can change the active InputCursor at any time, using the method BigWorld.setCursor (for example to change the InputCursor to be a mouse pointer instead).

The Free Camera is a free roaming camera that is neither tied to a fixed point in space nor following the player's avatar. It is controlled by the mouse (for direction) and keyboard (for movement), and allows the user to fly about the world. The free camera has inertia in order to provide smooth, gradual transitions in movement. It is not a gameplay camera, but is useful for debugging, development, and demonstration of the game.

The FlexiCam is a flexible camera that follows the character in the game world. It always positions itself at a specified point, relative to the character orientation, and always looks at a specified orientation, relative to the character's feet direction.

It is called FlexiCam because it has a certain amount of elasticity to its movement, allowing the sensation of speed to be visualised. This makes it especially useful for chasing vehicles.

The terrain system employed by the BigWorld client integrates neatly with the chunking system. It allows a wide variety of terrains to be created in an artistic manner and managed efficiently. There are two different terrain renderers available that target different machine specifications. They are called Advanced Terrain and Simple Terrain.

The advanced terrain engine uses a height map split up into blocks of 100 by 100 metres. Each block consists of height and texture information, normals, a hole map and LOD information.

- Configurable height map resolution

- Unlimited number of texture levels with configurable blend resolution and projection angles

- Configurable normal map resolution

- Configurable hole map resolution

- Per pixel lighting

- LOD System

  - Geo mip-mapping with geo-morphing

  - Normal map LOD

  - Texture LOD

  - Height map LOD

Texturing is done by blending multiple texture layers together. Each texture layer has its own projection angle and its own blend values for blending with other texture layers. The resolution of the blend values is configurable per space, and the layers themselves are stored per chunk. The textures are assumed to be RGBA, with the alpha channel used for the specular value.

The lighting of the advanced terrain is performed per pixel. A normal map is stored per block and used in the lighting calculations. The result is combined with the blended texture to output the final colour. The terrain allows up to 8 diffuse and 6 specular lights per block. For details of how the specular lighting is calculated, see Terrain specular lighting.

The terrain uses a horizon shadow map for shadowing. This map stores two angles (east-west) between which there is an unobstructed view of the sky from the terrain. In the terrain shader, these angles are checked against the sun angle, and the sun light is only applied if the sun angle falls between the horizon angles.
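The shader test described above amounts to a range check. The sketch below shows it in Python for clarity, with all three angles assumed to be in the same unit and measured along the same east-west arc. The function name is hypothetical, and the way the angles are actually encoded in the shadow map is not covered here.

```python
def sun_light_factor(sun_angle, horizon_start, horizon_end):
    """Return 1.0 if the sun lies inside the unobstructed arc, otherwise 0.0."""
    if horizon_start <= sun_angle <= horizon_end:
        return 1.0  # open sky: apply the sun light
    return 0.0      # sun is below one of the horizons: in shadow
```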

The purpose of the LOD system is to reduce the amount of CPU and GPU time spent rendering terrain and to reduce the memory footprint of the terrain. The terrain LOD system achieves this by reducing geometric and texture detail in the distance and by loading and unloading high resolution resources as they are needed. The LODing is broken up by resource so that texture and geometric detail can be streamed separately. The LOD distances are configurable in the space.settings file. See terrain section in space.settings for more information.

Geometry LOD is achieved by using geo-mipmaps and geo-morphing. Geo-mipmaps are generated from the high resolution height map for the terrain block. Depending on the x/z distance from the camera, a lower resolution version of the terrain block is displayed. To avoid popping when changing between the different resolutions of the height map, geo-morphing is used. This allows the engine to smoothly interpolate between two height map levels. Degenerate triangles are inserted between blocks of differing sizes to avoid sparkles.
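Geo-morphing can be pictured as a linear blend between the height of a vertex in the finer level and its height in the coarser level, driven by distance. The sketch below assumes a linear ramp between two distances. The function and parameter names are hypothetical, and the engine's actual blend curve may differ.

```python
def morph_height(h_fine, h_coarse, distance, morph_start, morph_end):
    """Blend a vertex height between two height map levels.

    Below `morph_start` the fine height is used, beyond `morph_end` the
    coarse height is used, and in between the two are interpolated.
    """
    t = (distance - morph_start) / float(morph_end - morph_start)
    t = min(1.0, max(0.0, t))  # clamp the blend factor to [0, 1]
    return h_fine + (h_coarse - h_fine) * t
```

Because the blend factor changes continuously with distance, the vertex slides from one level to the next instead of popping.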

Collision geometry is streamed in using two distinct resolutions. The low resolution collisions are always available, whereas the higher resolution collisions are streamed in depending on their x-z distance from the camera.

Texture LOD is performed by substituting the multi-layer blending with a single top-down image of the terrain block. The LOD image is smoothly blended in based on the x-z distance from the camera. The top-down image is generated in the World Editor.

Normal map LOD is performed by using low resolution and high resolution maps. The low resolution normal map is always available, and the high resolution map is streamed in and blended based on the x-z distance from the camera. The normal maps are generated in the World Editor. The size of the LOD normal map is a 16th of the resolution of the normal map or 32x32, whichever value is larger.

Since the advanced terrain allows for a number of configuration options the memory footprint of the terrain depends on the options selected.

In the Fantasydemo example provided, the terrain overhead is as follows (this information was captured using the resource counters in the Fantasydemo client, the graphics settings were set to high and the far plane was set to 1500 metres):

(this includes the textures used by the texture layers, which may also be used by other assets)

| Component | Size (bytes) |
|---|---|
| Collision data | 42,453,517 |
| Vertex buffers | 6,169,008 |
| Index buffers | 536,352 |
| Texture layers | 57,541,905 |
| Shadow maps | 26,361,856 |
| LOD textures | 35,148,605 |
| Hole maps | 4,096 |
| Normal maps | 6,572,032 |
| Total | 174,787,371 |

Terrain memory usage

A terrain2 section is contained in a chunk's .cdata file. It contains all the resources for the terrain in a chunk. The different types of terrain data are described in BNF format in the following chapter.

The heights sections store the height map for the terrain block. Multiple heights sections are stored in the block, one for each LOD level, and each heights section stores data at half the resolution of the previous one. The heights sections are named "heights?", where ? is replaced by a number. The highest resolution height map is stored in a section named heights, the second highest in a section named heights1, and so on down to a map that stores 2x2 heights. For example, if the height map resolution is 128x128, 7 height maps are stored in the file (heights, heights1, ... heights6).

<heightMap> ::= <header><heightData>

<header> ::= <magic><width><height><compression><version><minHeight><maxHeight><padding>

<magic>

uint32 0x00706d68 (string "hmp\0")

<width>

uint32 containing the width of the data

<height>

uint32 containing the height of the data

<compression>

(unused) uint32 containing the compression type

<version>

uint32 containing the version of the data, currently 4

<minHeight>

float containing the minimum height of this block

<maxHeight>

float containing the maximum height of this block

<padding>

4 bytes of padding to make the header 16-byte aligned

<heightData>

PNG compressed block of int32 storing the height in millimetres, dimensions = width * height from the header
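As a worked illustration of the layout above, the following Python sketch unpacks the 32-byte height map header and lists the section names expected for a given resolution. It assumes little-endian storage (consistent with the magic value reading "hmp\0" byte by byte); the function names are hypothetical, and decoding of the PNG-compressed height data is left out.

```python
import struct

HEIGHT_MAGIC = 0x00706D68  # bytes "hmp\0" when stored little-endian
# magic, width, height, compression, version (uint32), minHeight, maxHeight (float), 4 pad bytes
HEIGHT_HEADER = struct.Struct("<5I2f4x")


def parse_height_header(data):
    """Unpack the header at the start of a heights section (assumed little-endian)."""
    magic, width, height, compression, version, min_h, max_h = \
        HEIGHT_HEADER.unpack_from(data)
    if magic != HEIGHT_MAGIC:
        raise ValueError("not a height map section")
    return {"width": width, "height": height, "compression": compression,
            "version": version, "minHeight": min_h, "maxHeight": max_h}


def height_section_names(resolution):
    """List section names from full resolution down to the 2x2 map."""
    names = []
    level = 0
    while resolution >= 2:
        names.append("heights" if level == 0 else "heights%d" % level)
        resolution //= 2
        level += 1
    return names
```

The compressed height data begins immediately after the header, at offset HEIGHT_HEADER.size (32 bytes).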

The layer sections store the texture layers for the terrain block. Multiple layer sections are stored in the terrain block, and each section describes one texture layer. The layer sections are named "layer ?", where ? is replaced by a number starting from 1. For example, if the block has 3 layers, three layer sections are stored ("layer 1", "layer 2", "layer 3").

<textureLayer> ::= <header><textureName><blendData>

<header> ::= <magic><width><height><bpp><uProjection><vProjection><version><padding>

<textureName> ::= <length><string>

<magic>

uint32 0x00646c62 (string "bld\0")

<width>

uint32 containing the width of the data

<height>

uint32 containing the height of the data

<bpp>

(unused) uint32 containing the size of the entries in the layer data

<uProjection>

Vector4 containing the projection of the u coordinate of the texture layer

<vProjection>

Vector4 containing the projection of the v coordinate of the texture layer

<version>

uint32 containing the version of the data, currently 2

<padding>

12 bytes of padding to make the header 16-byte aligned

<length>

the length of the texturename string

<string>

the name of the texture used by this layer

<blendData>

png compressed block of uint8 defining the strength of this texture layer at each x/z position

The normals section stores the high resolution normal map for the terrain block. The lodNormals section stores the LOD normals for the terrain block. The LOD normals are generally 1/16th of the size of the normals.

<normals> ::= <header><data>

<header> ::= <magic><version><padding>

<magic>

uint32 0x006d726e (string "nrm\0")

<version>

uint32 containing the version of the data, currently 1

<padding>

8 bytes of padding to make the header 16-byte aligned

<data>

PNG compressed block storing 2 signed bytes per entry for the x and z components of the normal. The y component is calculated in the shader.

The holes section stores the hole map for the terrain block. This section is only stored when a terrain block has holes in it.

<holes> ::= <header><data>

<header> ::= <magic><width><height><version>

<magic>

uint32 0x006c6f68 (string "hol\0")

<width>

uint32 containing the width of the data

<height>

uint32 containing the height of the data

<version>

uint32 containing the version of the data, currently 1

<data>

The hole data, stored in a bit field of width * height. Each row in the data is rounded up to the nearest byte. A bit set to 1 denotes a hole in the map.
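Looking up a single entry in such a bit field can be sketched as below. Two points are assumptions rather than facts from the format description: that "rounded up" means each row occupies a whole number of bytes, and that bits are numbered from the least significant bit of each byte. The function name is hypothetical.

```python
def is_hole(data, width, x, z):
    """Return True if the bit for column x, row z is set in the hole bit field.

    `data` is the raw bit field that follows the holes header.
    """
    stride = (width + 7) // 8                  # bytes per row, rounded up
    byte = bytearray(data)[z * stride + x // 8]
    return bool((byte >> (x % 8)) & 1)         # assumed: least significant bit first
```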

The horizonShadows section stores the horizon shadows for the terrain block.

<shadows> ::= <header><data>

<header> ::= <magic><width><height><bpp><version><padding>

<magic>

uint32 0x00646873 (string "shd\0")

<width>

uint32 containing the width of the data

<height>

uint32 containing the height of the data

<bpp>

(unused) uint32 containing the bits per entry in the data

<version>

uint32 containing the version of the data, currently 1

<padding>

12 bytes of padding to make the header 16-byte aligned

<data>

The shadow data, stored as (uint16, uint16) * width * height. Each entry stores two angles between which there is no occlusion from any terrain or objects.

The lodTexture.dds section stores the LOD texture for the terrain block. The LOD texture is a low resolution snapshot of all the texture layers blended together. The texture is stored in the DXT5 format. For more information about the dds texture format please refer to the DirectX documentation.

The dominantTextures section stores the dominant texture map. The dominant texture map stores the texture with the highest blend for each x/z location in the terrain block.

<dominant> ::= <header><texNames><data>

<header> ::= <magic><version><numTextures><texNameSize><width><height><padding>

<magic>

uint32 0x0074616d (string "mat\0")

<version>

uint32 containing the version of the data, currently 1

<numTextures>

uint32 containing the number of textures referenced by the dominant texture map

<texNameSize>

uint32 containing the size of the texture entries

<width>

uint32 containing the width of the data

<height>

uint32 containing the height of the data

<padding>

8 bytes of padding to make the header 16-byte aligned

<texNames>

numTextures entries of texNameSize size containing the names of the dominant textures referred to in this map. Texture names shorter than texNameSize are padded with 0.

<data>

Stored as a compressed bin section. A byte array of width * height; each entry is an index into the texture names, identifying the dominant texture at the x/z location of the entry.
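As an illustration of the header and name table described above, the following sketch reads the fixed-size fields and the zero-padded texture names. It assumes little-endian fields and ASCII texture names, neither of which is stated here, and it stops at the start of the data because the compression used for the bin section is not described in this section. `parse_dominant_header` is a hypothetical name.

```python
import struct

DOMINANT_MAGIC = 0x0074616D  # bytes "mat\0" when written little-endian
HEADER_SIZE = 6 * 4 + 8      # six uint32 fields plus 8 bytes of padding


def parse_dominant_header(blob):
    """Read the dominantTextures header and texture name table."""
    (magic, version, num_textures,
     tex_name_size, width, height) = struct.unpack_from("<6I", blob, 0)
    if magic != DOMINANT_MAGIC:
        raise ValueError("not a dominantTextures section")

    offset = HEADER_SIZE
    names = []
    for _ in range(num_textures):
        raw = blob[offset:offset + tex_name_size]
        # Names shorter than texNameSize are padded with zero bytes.
        names.append(raw.rstrip(b"\0").decode("ascii"))
        offset += tex_name_size

    return {
        "version": version,
        "width": width,
        "height": height,
        "names": names,
        "data_offset": offset,  # start of the compressed index data
    }
```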

The terrain section in the space.settings file contains the configuration options for the terrain. The values in the lodInfo and server sections can be modified, but the root level values should only be modified by the World Editor.

<version> 200 (int) </version>

<heightMapSize> uint </heightMapSize>

<normalMapSize> uint </normalMapSize>

<holeMapSize> uint </holeMapSize>

<shadowMapSize> uint </shadowMapSize>

<blendMapSize> uint </blendMapSize>

<lodInfo>

<startBias> float </startBias>

<endBias> float </endBias>

<lodTextureStart> float </lodTextureStart>

<lodTextureDistance> float </lodTextureDistance>

<blendPreloadDistance> float </blendPreloadDistance>

<lodNormalStart> float </lodNormalStart>

<lodNormalDistance> float </lodNormalDistance>

<normalPreloadDistance> float </normalPreloadDistance>

<defaultHeightMapLod> uint </defaultHeightMapLod>

<detailHeightMapDistance> float </detailHeightMapDistance>

<lodDistances>

+<distance?> float </distance?>

</lodDistances>

<server>

<heightMapLod> uint </heightMapLod>

</server>

</lodInfo>

<version>

The version of the terrain. This value is 200 for advanced terrain.

<heightMapSize>

The size of the height map per terrain block. This value is a power of 2 between 4 and 256.

<normalMapSize>

The size of the normal map per terrain block. This value is a power of 2 between 32 and 256.

<holeMapSize>

The size of the hole map per terrain block. This can be any value up to 256.

<shadowMapSize>

The size of the shadow map per terrain block. This value is a power of 2 between 32 and 256.

<blendMapSize>

The size of the blend maps per terrain block. This value is a power of 2 between 32 and 256.

<lodInfo>

This section contains the configuration for the terrain LOD system.

<startBias>

This value is the bias value for the start of geo-morphing. It defines where a LOD level starts fading out to the next one. This value is a factor of the difference between two lodDistances.

<endBias>

This value is the bias value for the end of geo-morphing. It defines where a LOD level has fully faded out to the next one. This value is a factor of the difference between two lodDistances.

<lodTextureStart>

This is the start distance for blending in the LOD texture. Up until this distance, the blended layers are used for rendering the terrain.

<lodTextureDistance>

This is the distance over which the LOD texture is blended in. This value is relative to lodTextureStart.

<blendPreloadDistance>

This is the distance at which the blends are preloaded. This value is relative to lodTextureDistance and lodTextureStart.

<lodNormalStart>

This is the start distance for blending in the LOD normals.

<lodNormalDistance>

This is the distance over which the full normal map is blended in. This value is relative to lodNormalStart.

<normalPreloadDistance>

This is the distance at which the full normal maps are preloaded. This value is relative to lodNormalStart and lodNormalDistance.

<defaultHeightMapLod>

This is the default LOD level of height map to load: 0 = the full height map, 1 = half resolution, 2 = quarter resolution, etc.

<detailHeightMapDistance>

This is the distance at which the full height map is loaded.

<lodDistances>

This section contains the geometry LOD distances.

<distance>

The distance sections define the distances at which each geometry LOD level is blended out. distance0 is for the first LOD level, distance1 for the second LOD level, etc. The distance between LOD levels must be at least half the diagonal distance of a terrain block (~71 metres), because only a difference of 1 LOD level between neighbouring blocks is supported.

<server>

This section contains the information used by the server.

<heightMapLod>

This defines which LOD level to load on the server. This value is used to speed up loading on the server.
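The spacing rule for lodDistances can be checked before a hand-edited space.settings file is used. The sketch below is a hypothetical validation helper, not part of the engine or tools. It assumes 100 metre terrain blocks and only compares consecutive distance values; whether the rule also applies between the camera and distance0 is not stated in this document.

```python
import math

BLOCK_SIZE = 100.0  # metres, the size of a terrain block


def min_lod_spacing(block_size=BLOCK_SIZE):
    """Half the diagonal of a square terrain block."""
    return math.hypot(block_size, block_size) / 2.0


def check_lod_distances(distances, block_size=BLOCK_SIZE):
    """Return (index, gap) for every distance that is too close to the
    previous one; an empty list means the spacing rule is satisfied."""
    limit = min_lod_spacing(block_size)
    problems = []
    for i in range(1, len(distances)):
        gap = distances[i] - distances[i - 1]
        if gap < limit:
            problems.append((i, gap))
    return problems
```

For example, `check_lod_distances([100, 200, 250])` flags the third entry, because 250 is only 50 metres beyond 200.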

Works with the chunking system.

Individual grid squares addressable from disk and memory.

Based on a 4x4 metre grid (tiles), which matches portal dimensions.

Low memory footprint.

Low disk footprint.

Fast rendering.

Automatic blending.

Easy tool integration.

Layered terrain with tiled textures for easy strip creation.

Uses texture projection to apply texture coordinates to save vertex memory.

The terrain is a huge height map defined by a regular grid of height poles, every 4x4 metres. Terrain is organised into terrain blocks of 100x100 metres. Each of these blocks can have up to four textures, which are blended on a per-vertex (i.e., per-pole) basis. The terrain is also self-shadowing, and allows holes to be cut out of it, for things like cave openings. The terrain also contains detail information, so that the detail objects can be matched to the terrain type.

The terrain integrates properly with the chunking: each terrain block is 100x100 metres, which is the size of the outside chunks. The terrain blocks are stored in separate files, so that they can be opened as needed.

Each terrain block covers one chunk, each with dimension of 100x100 metres. It contains 28x28 height, blend, shadow, and detail values (there are two extra rows and one column to allow for boundary interpolation). Each terrain block also stores 25x25 hole values, one for each 4x4m tile.

The table below displays the terrain cost per chunk.

| Component | Size calculation | Size (bytes) |
|---|---|---|
| Headers | 256 (header) + 128 x 4 (texture names) | 768 |
| Height | 28 x 28 x sizeof( float ) | 3,136 |
| Blend | 28 x 28 x sizeof( dword ) | 3,136 |
| Shadow | 28 x 28 x sizeof( word ) | 1,568 |
| Detail | 28 x 28 x sizeof( byte ) | 784 |
| Hole | 25 x 25 x sizeof( bool ) | 625 |
| Total | | 10,017 |

Terrain cost per chunk

For example, for a 15x15 km world the total disk size of the terrain would be: 10,017 x 150 x 150 ~ 215MB.

With a field of view of 500m, and each terrain block covering 100x100 metres, a typical scene would require roughly 160 terrain blocks in memory at any one time.

The memory usage of this much terrain is about 2MB, plus data management overheads.
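The figures above can be reproduced with a short calculation. The sketch below simply restates the table in Python. The 160-block figure for a typical scene is taken from the text rather than derived, and the function names are illustrative only.

```python
SAMPLES = 28 * 28  # height, blend, shadow and detail values per block
TILES = 25 * 25    # hole values per block, one per 4x4m tile

COMPONENT_BYTES = {
    "headers": 256 + 128 * 4,  # header plus four texture names
    "height": SAMPLES * 4,     # float
    "blend": SAMPLES * 4,      # dword
    "shadow": SAMPLES * 2,     # word
    "detail": SAMPLES * 1,     # byte
    "hole": TILES * 1,         # bool
}
BYTES_PER_BLOCK = sum(COMPONENT_BYTES.values())


def terrain_disk_bytes(world_km_x, world_km_z, block_metres=100):
    """Total terrain size on disk for a rectangular world."""
    blocks_x = world_km_x * 1000 // block_metres
    blocks_z = world_km_z * 1000 // block_metres
    return BYTES_PER_BLOCK * blocks_x * blocks_z


def terrain_memory_bytes(blocks_in_view=160):
    """Terrain memory for the blocks loaded around the camera."""
    return BYTES_PER_BLOCK * blocks_in_view
```

A 15x15 km world gives 225,382,500 bytes, which is the ~215MB quoted above when a megabyte is counted as 1,048,576 bytes, and 160 blocks come to roughly 1.6 million bytes before management overheads.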

The TerrainTextureSpacing tag included in the environment section of file <res>/resources.xml (for details on the precedence of entries in the various copies of file resources.xml, see File resources.xml) determines the size (in metres) to which a texture map will be stretched/shrunk when applied to the terrain.

This value determines both the length and height of the texture tile.

The equation for the specular lighting is:

SpeClr = TerSpeAmt * ( (SpeDfsAmt * TerDfsClr) + SunClr) * SpeRfl

The list below describes each variable:

SpeClr (Specular colour)

Final colour reflected.

TerSpeAmt (Specular amount)

Value is given by the weighted blend of the alpha channel of the 4 terrain textures.

SpeDfsAmt (Specular diffuse amount)

Initial value is stored in the variable specularDiffuseAmount in the effect file bigworld/res/shaders/terrain/terrain.fx.

Its value can be tweaked during runtime via the watcher render/terrain/specularDiffuseAmount.

Once the desired result is achieved, the new value can be stored in the effect file.

TerDfsClr (Terrain diffuse colour)

Value is given by the weighted blend of the RGB channels of the 4 terrain textures.

SunClr (Sunlight colour)

Colour impinged by sunlight.

SpeRfl (Specular reflection)

Specular reflection value at the given pixel, adjusted by the Specular power coefficient

As a result of the formula, a small amount of the Terrain diffuse colour (TerDfsClr) is added to the Sunlight colour (SunClr) to give the Specular colour (SpeClr).
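To make the formula concrete, here is a minimal sketch that evaluates it per colour channel. The function and parameter names are hypothetical and simply mirror the variables listed above. Colours are assumed to be RGB tuples of floats, and the specular reflection term is assumed to have already been raised to the specular power.

```python
def specular_colour(ter_spe_amt, spe_dfs_amt, ter_dfs_clr, sun_clr, spe_rfl):
    """SpeClr = TerSpeAmt * ((SpeDfsAmt * TerDfsClr) + SunClr) * SpeRfl,
    applied to each colour channel in turn."""
    return tuple(
        ter_spe_amt * ((spe_dfs_amt * terrain) + sun) * spe_rfl
        for terrain, sun in zip(ter_dfs_clr, sun_clr)
    )
```

With a specular diffuse amount of 0, the result depends only on the sunlight colour. Raising it tints the highlight towards the terrain's own diffuse colour.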

The initial value of the power of specular lighting is stored in the variable specularPower in the effect file bigworld/res/shaders/terrain/terrain.fx. Its value can be tweaked during runtime via the watcher render/terrain/specularPower. Once the desired result is achieved, the new value can be stored in the effect file.

Note that the Specular power can only be adjusted for shader hardware version 2.0 and later. Earlier versions of shader hardware are limited to a Specular power value of 4 (which is the default for shader hardware version 2.0 and later).

Note

The final amount of specular lighting applied to the terrain is affected by the variable specMultiplier in file bigworld/res/shaders/terrain/terrain.fx.

Set it to anything other than 1 to rescale the specular lighting, or 0 to completely disable it.

Note

Terrain specular lighting can be turned off via the TERRAIN_SPECULAR graphics setting.

For details, see Graphics settings.

All objects in the BigWorld client engine that are drawn outside are affected by cloud shadows. This effect is applied per-pixel, and is performed using a light map (stored as a texture feed in the engine) that is projected onto the world.

This light map is exposed to the effects system via macros defined in the file bigworld/res/shaders/std_effects/stdinclude.fxh.

By default, the texture feed is named skyLightMap, and therefore is accessible to Python via the command:

BigWorld.getTextureFeed( "skyLightMap" )

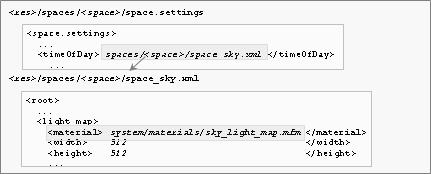

Information for the sky light map is found in the sky XML file, which is defined in the file <res>/spaces/<space>/space.settings (for details on this file's grammar, see the document File Grammar Guide's section space.settings) for the given space. The parameters are the same as in any BigWorld light map.

Cloud shadowing requires one extra texture layer per material. While the fixed-function pipeline supports this for most materials, cloud shadowing on bump-mapped and specular objects requires more than four sets of texture coordinates, meaning that for bump-mapped objects it will only work on Pixel Shader 2 and above.

The sky light map is calculated by the code in C++ file src/lib/romp/sky_light_map.cpp, and is updated by the sky module during draw.

It is exposed to the effect engine via automatic effect constants. The light map is updated only when a new cloud is created, or the current set of clouds has moved more than 25% downwind.

Between updates, the projection texture coordinates are slid by the wind speed so that the cloud shadows appear to move with the clouds. All effect files incorporate the cloud shadowing effect, including the terrain and flora.

There are two effect constants exposed to effect files to aid with sky light mapping:

SkyLightMapTransform

Sets the "World to SkyLightMap" texture projection. Use this constant to convert x,z world vertex positions to u,v texture coordinates.

SkyLightMap

Exposes the sky light map texture to effect files.

There are several macros in file bigworld/res/shaders/std_effects/stdinclude.fxh that assist with integrating cloud shadowing into your effect files.

These macros are described in the list below:

BW_SKY_LIGHT_MAP_OBJECT_SPACE, BW_SKY_LIGHT_MAP_WORLD_SPACE

These macros declare the variables and constants required for the texture projection, including:

World space camera position.

Sky light map transform.

The sky light map itself.

When using an effect file that performs lighting in object space (for example, if you are also using the macro DIFFUSE_LIGHTING_OBJECT_SPACE), use the variation BW_SKY_LIGHT_MAP_OBJECT_SPACE, and that will have the world matrix declared.

BW_SKY_LIGHT_MAP_SAMPLER

This macro declares a sampler object that is used when implementing cloud shadows in a pixel shader.

BW_SKY_MAP_COORDS_OBJECT_SPACE, BW_SKY_MAP_COORDS_WORLD_SPACE

These macros perform the texture projection on the given vertex position, and set the texture coordinates into the given register.

Be sure to pass the positions in the appropriate reference frame, depending on which set of macros you are using.

BW_TEXTURESTAGE_CLOUDMAP

This macro defines a texture stage that multiplies the previous stage's result by the appropriate cloud shadowing value.

It should be used after any diffuse lighting calculation, and before any reflection or specular lighting.

SAMPLE_SKY_MAP

This macro samples the sky light map in a pixel shader, and returns a 1D-float value representing the amount by which you should multiply your diffuse lighting value.

After the light map is calculated based on the current clouds, it is clamped to a maximum value. This means that the cloud map can never get too dark, or have too great an effect on the world.

For example, even if the sun is completely obscured by clouds during the day, there will still be enough ambient lighting and illumination from the cloud layer itself such that sunlight still takes effect.

The BigWorld watcher Client Settings/Clouds/max sky light map darkness sets the maximum value that the sky light map can have. A value of 1 means that the sky light map is able to completely obscure the sun (full shadowing). The default value of 0.65 represents the BigWorld artists' best guess at the optimal value for cloud shadowing. A value of 0 would mean there is never any effect of cloud shadows on the world.

This value is also read from the file sky.xml in the light map settings. It is represented by the maxDarkness tag. For details on the sky light map settings file, see Sky light map.
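The effect of the maximum darkness setting can be pictured with a small sketch. It is an interpretation only: it assumes the cloud shadow at a point is expressed as a darkness amount between 0 (no cloud) and 1 (fully occluded), and that the value used to multiply diffuse lighting is one minus the clamped darkness. The engine's internal representation is not described here, and `clamp_sky_light` is a hypothetical name.

```python
def clamp_sky_light(shadow_amount, max_darkness=0.65):
    """Return the diffuse lighting multiplier for a given cloud shadow.

    shadow_amount: assumed range 0.0 (clear sky) to 1.0 (fully occluded).
    max_darkness:  upper limit on how dark the light map may get.
    """
    darkness = min(max(shadow_amount, 0.0), max_darkness)
    return 1.0 - darkness
```

Under these assumptions, a fully occluded point with the default setting still receives 35% of its diffuse lighting, a setting of 1 allows full shadowing, and a setting of 0 removes the effect entirely.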

The scene graph drawn by the BigWorld client is built from small convex chunks of space. This has many benefits including easy streaming, reduced loading times, concurrent world editing, and facilitation of server scene updates and physics checking.

The concepts and implementation of the chunking system are described in the following sections.

The following terms are related to the BigWorld chunking system:

A space is a continuous three-dimensional Cartesian medium. Each space is divided piecewise into chunks, which occupy the entire space but do not overlap. Every point in the space is in exactly one chunk. A space is split into columns of 100x100 metres in the horizontal dimensions, each spanning the total vertical range. Examples of separate spaces include planets, parallel spaces, space stations, and 'detached' apartment/dungeon levels.

A chunk is a convex three-dimensional volume. It contains a description of the scene objects that reside inside it. Scene objects include models, lights, entities, terrain blocks, etc, known as chunk items. It also defines the set of planes that form its boundary.

Note

The outside chunk of a column is exempt from this: it need only define the four planes to the adjacent columns. The boundary planes of other chunks overlapping that grid square are used to build a complete picture of the division of space inside it.

Some planes have portals defined on them, indicating that a neighbouring chunk is visible through them.

A portal is a polygon plus a reference to the chunk that is visible through that polygon. It includes a flag to indicate whether it permits objects to pass through it. Some portals may be named so that scripts can address them, and change their permissivity.

The following files are used by the chunking system:

One space.settings file for each space in the universe (XML format)

For details on this file's grammar, see the document File Grammar Guide's section space.settings.

<res>/spaces/<space>/space.settings

Environment settings

Bounding rectangle of grid squares

Multiple .chunk files for each space (XML format)

For details on this file's grammar, see the document File Grammar Guide's section .chunk.

<res>/spaces/<space>/XXXXZZZZo.chunk (o = outside)

<res>/spaces/<space>/CCCCCCCCi.chunk (i = inside)

List of scene objects

Texture sets used

Boundary planes and portals (including references to visible chunks)

Collision scene

Multiple .cdata files for each space (binary format)

<res>/spaces/<space>/XXXXZZZZ.cdata

Terrain data such as:

Height map data

Overlay data

Textures used

— or —

Multiple instances of lighting data for each object in the chunk:

Static lighting data

A colour value for each vertex in the model

Includes are transparent after being loaded (to client, server, and scripts). Label clashes are handled by appending '_n' to labels, where n is the number of objects with that label already.

Includes are expanded inline where they are encountered, and do not need to have a bounding box for the purposes of the client or server.

The World Editor does not generate includes.

Material overrides and animation declarations remain the domain of model files. For more details, see Models.

Only entities that are implicitly instantiated need to have their ID field filled in. If it is zero or is missing, then the entity is assigned a unique ID from either the client's pool (if it is a client-instantiated entity) or the creating cell's pool (if it is a server-instantiated entity).

If an entity needs a label, it must include the label as a property in its formal type definition.

The special chunk identifier heaven may be used if only the sky (gradient, clouds, sun, moon, stars, etc...) is to be drawn there. Similarly with earth, if the terrain ought to be drawn. Therefore, outside chunks will have six sides, with the heaven chunk on the top and the earth chunk on the bottom.

The absence of a chunk reference in a portal means it is unconnected and that nothing will be drawn there.

If a chunk is included inside another, then its boundary planes are ignored — only things like its includes, models, lights, and sounds are used.

An internal portal means that the specified boundary is not an actual boundary, but instead that the space occupied by the chunk it connects to (and all chunks that that chunk connects to) should be logically subtracted from the space owned by this chunk, as defined by its non-internal boundaries. This was originally intended only for 'outside' chunks to connect to 'inside' chunks, but it may be readily adapted for 'interior portals', the complement to 'boundary portals'.

In a portal definition, the vAxis for the 2D polygon points is found by the cross product of the normal with uAxis.

In boundary definitions, the normals should point inward.

Everything in the chunk except the bounding box is interpreted in the local space of the chunk (as specified in the top-level transform section).
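The rule that a portal's vAxis is the cross product of the normal with uAxis can be illustrated as follows. This is a sketch only: vectors are plain 3-tuples, and the `origin` parameter (a point on the portal plane from which the 2D points are measured) is an assumption made for the example, not something defined in this section.

```python
def cross(a, b):
    """Cross product of two 3D vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def portal_point_to_3d(origin, normal, u_axis, point_2d):
    """Lift a 2D portal polygon point into the chunk's local space."""
    v_axis = cross(normal, u_axis)  # vAxis = normal x uAxis
    u, v = point_2d
    return tuple(
        o + u * ua + v * va
        for o, ua, va in zip(origin, u_axis, v_axis)
    )
```

For a portal with normal (0, 0, 1) and uAxis (1, 0, 0), the derived vAxis is (0, 1, 0), so the 2D point (2, 3) lies 2 units along x and 3 units along y from the origin.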

At every frame, the Chunk Manager performs a simple graph traversal of all the chunks it has loaded, looking for new chunks to load. It follows the portals between chunks, keeping track of how far it has 'travelled' in its scan. Its scan is limited to the maximum visible distance, i.e., a little further than the far plane distance.

The closest unloaded chunk it finds on this traversal is the chunk that is loaded next. Loading is done in a separate thread, so it does not interfere with the running of the game. Similarly, any chunks that are beyond the reach of the scan are candidates for ejecting (unloading).
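The scan described above can be sketched as a shortest-path traversal over the portal graph. The code below is an illustration of the idea rather than the Chunk Manager's implementation: chunks are plain strings, `portals` is a hypothetical dictionary mapping each chunk to `(neighbour, distance)` pairs, and portals are only followed out of chunks that are already loaded.

```python
import heapq


def scan_chunks(start, portals, loaded, max_distance):
    """Return (closest unloaded chunk or None, loaded chunks out of reach)."""
    best = {start: 0.0}
    heap = [(0.0, start)]
    to_load = None
    reached = set()

    while heap:
        dist, chunk = heapq.heappop(heap)
        if dist > best.get(chunk, float("inf")):
            continue  # stale queue entry
        if chunk not in loaded:
            # Entries are popped in distance order, so the first unloaded
            # chunk seen is the closest one.
            if to_load is None:
                to_load = chunk
            continue
        reached.add(chunk)
        for neighbour, step in portals.get(chunk, ()):
            new_dist = dist + step
            if new_dist <= max_distance and new_dist < best.get(neighbour, float("inf")):
                best[neighbour] = new_dist
                heapq.heappush(heap, (new_dist, neighbour))

    eject_candidates = set(loaded) - reached
    return to_load, eject_candidates
```

In the real client the chosen chunk would then be handed to the loading thread. Here the function only reports the decision.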

The focus grid is a set of columns surrounding the camera position. Each column is 100x100 metres and is aligned to the terrain squares and outside chunks. The focus grid is sized to just exceed the far plane.

For a far plane of 500m, for example, the focus grid goes 700m in each direction, making for 14 x 14 = 196 columns total.
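The column count in this example follows directly from the grid extent. The sketch below takes the 700m half-extent as an input, since how the engine derives it from the far plane is not stated here, and lists the grid indices of the columns around a camera position. The exact centring of the grid on the camera's column is an assumption.

```python
COLUMN_SIZE = 100.0  # metres per column side


def focus_grid_columns(camera_x, camera_z, half_extent):
    """Return (x, z) grid indices of the columns in the focus grid."""
    span = int(half_extent // COLUMN_SIZE)  # columns in each direction
    cx = int(camera_x // COLUMN_SIZE)       # column containing the camera
    cz = int(camera_z // COLUMN_SIZE)
    return [
        (x, z)
        for z in range(cz - span, cz + span)
        for x in range(cx - span, cx + span)
    ]
```

With a half-extent of 700m this yields 14 columns per side, or 196 in total, matching the figure above.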

The set of columns in the focus grid is dependent on the camera position. As the camera moves, the focus grid disposes columns that are no longer under the grid and 'focuses' on ones that have just come close enough.

Each column contains a hull tree and a quad tree.

A hull tree is a kind of binary-space partitioning tree for convex hulls. It can handle hulls that overlap. It can do point tests and proper line traversals.

The hull tree is formed from the boundaries of all the chunks that overlap the column. From this tree, the chunk that any given point lies in (e.g., the location of the camera) can be quickly determined.

The quad tree is made up of the bounding boxes (or, potentially, any other bounding convex hull) of all the obstacles that overlap the column. This tree is used for collision scene tests. Chunk items are responsible for adding and implementing obstacles. Currently only model and terrain chunk items add any obstacles.

If a chunk or obstacle is in more than one column, it is added to the trees of both columns.

Using its focus grid of obstacle quad trees, the chunk space class can sweep any 3D shape through its space, and report all the triangle collisions to a callback object. The currently supported 3D shapes are points and triangles, but any other could be added with very little difficulty.

The bottommost level of collision checking is handled by a generic obstacle interface, so any conceivable obstacle could be added to this collision scene, as long as it can quickly determine when another shape collides with it (in its own local coordinates).

For more details, see file bigworld/src/client/physics.cpp.

A sway item is a chunk item that is swayed by the passage of other chunk items. Whenever a dynamic chunk item moves, any sway items in that chunk get the sway method called on them, specifying the source and destination of the movement.

Currently the only user of this is ChunkWater. It uses movements that pass through its surface to make ripples in the water. This is why the ripples work for any kind of dynamic item — from dynamic obstacles/moving platforms to player models to small bullets.

In mf/src/lib/chunk/chunk_water.cpp:

/**

* Constructor

*/

ChunkWater::ChunkWater() :

ChunkItem( 5 ),

pWater_( NULL )

{

}

...

/**

* Apply a disturbance to this body of water

*/

void ChunkWater::sway( const Vector3 & src, const Vector3 & dst )

{

if (pWater_ != NULL)

{

pWater_->addMovement( src, dst );

}

}

In mf/src/lib/chunk/chunk_item.hpp:

...

class ChunkItem : public SpecialChunkItem

{

public:

ChunkItem( int wantFlags = 0 ) : SpecialChunkItem( wantFlags ) { }

};

...

typedef ClientChunkItem SpecialChunkItem;

...

class ClientChunkItem : public ChunkItemBase

{

public:

ClientChunkItem( int wantFlags = 0 ) : ChunkItemBase( wantFlags ) { }

};

...

ChunkItemBase( int wantFlags = 0 );

bool wantsDraw() const { return !!(wantFlags_ & 1); }

bool wantsTick() const { return !!(wantFlags_ & 2); }

bool wantsSway() const { return !!(wantFlags_ & 4); }

bool wantsNest() const { return !!(wantFlags_ & 8); }
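The value 5 passed to the ChunkItem constructor by ChunkWater is a bit mask over the flags tested by the wants* accessors. The following sketch decodes such a value in Python. The constant names are hypothetical (the C++ shown above uses literal values), but the bit assignments are taken from it.

```python
WANTS_DRAW = 1
WANTS_TICK = 2
WANTS_SWAY = 4
WANTS_NEST = 8


def decode_want_flags(want_flags):
    """Mirror ChunkItemBase's wantsDraw/Tick/Sway/Nest accessors."""
    return {
        "draw": bool(want_flags & WANTS_DRAW),
        "tick": bool(want_flags & WANTS_TICK),
        "sway": bool(want_flags & WANTS_SWAY),
        "nest": bool(want_flags & WANTS_NEST),
    }
```

Decoding 5 (1 + 4) shows that ChunkWater asks to be drawn and to receive sway calls, but not tick or nest calls.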

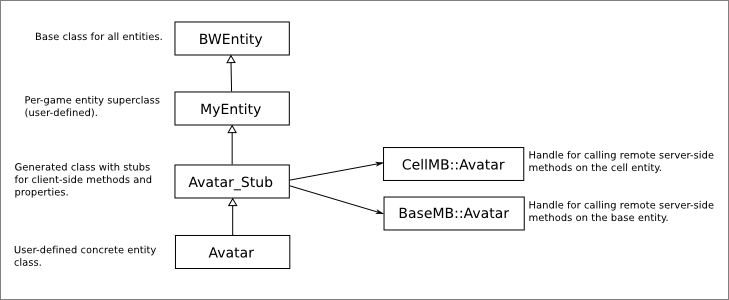

Entities are a key BigWorld concept, and involve a large system in their own right. They are the link between the client and the server and are the feature most particular to BigWorld Technology, in comparison to other 3D game systems.

This section deals only with the management aspects of entities on the client.

For details on the environment in which entity scripts are placed, and the system that supports them, see Scripting. For details on the definition of an entity, which is shared between the server and the client, see the document Server Programming Guide's section Directory Structure for Entity Scripting → The Entity Definition File.

Depending on the entity type, it can exist in different parts of BigWorld, as listed below:

Client-only

For example, a security camera prop, or an information icon. Client-only entities are created by setting the Client-Only attribute to true in World Editor's Properties panel (for details on this panel, see the document Content Tools Reference Guide's section World Editor → Panel summary → Properties panel). Client-only entities should not have cell or base scripts.

Client and server

The entity will exist in both parts at the same position. For example, the player Avatar, NPCs, a vending machine.

Server-only

The entity will be instantiated on the server only. For example, an NPC spawn point or teleportation destination point. Server-only entities do not have any scripts on the client side.

The Entity Manager stores two lists of entities:

Active List — Contains the entities that are currently in the world, as relayed by the server or indicated by the chunk files.

Cached List — Contains the entities that have recently been in the world, but are now just outside the client's 500m radius area of interest (AoI).

Entities are cached so that if they come back into the client's AoI shortly after they have left it, the server does not have to resend all the data associated with that entity; only the fields that have changed.

Since messages may be received from the server out of order, the Entity Manager is not sensitive to their order. For example, if an entity enters the player's AoI then quickly leaves it, the BigWorld client behaves correctly even if it receives the entity's 'leave AoI' message before its 'enter AoI' message.

The Entity Manager can always determine the relative time that it should have received a message from the sequence number of the packet, since packets are sent at regular intervals.

Entity scripts on the client are Python classes that derive from the BigWorld.Entity class. This base class exposes a number of methods and attributes which allow the script to control the behaviour of the entity (e.g. position, orientation, and the entity model are all exposed via the BigWorld.Entity interface).

In addition to exposing methods and attributes, the client engine will notify the entity scripts when certain events occur via named event handlers.

See Scripting and the Client Python API reference guide for details on what methods, attributes, and event handlers are available.

Generally, each entity type requires some resources in order to operate, for example models, textures, shaders, sounds, or particle systems. The BigWorld client provides a couple of ways to make sure these resources are available when the entity enters the world, avoiding stalling the main thread.

When the client starts up, it will query each entity Python module for a function named preload. Resource names returned by this function will be loaded on client startup and kept in memory for the entire life-time of the client (i.e. they will be instantly available for use at any time). This is useful for commonly used assets to avoid potentially loading and re-loading at a later time. The tradeoff, however, is that the client will take longer to start and will use more memory (even if the resource isn't actually being used at some point).

To use the preloads mechanism, create a global function called preload in the relevant entity module. It must take a single parameter which is a Python list containing the resources to preload. Modify this list in place (e.g. using list.append or list concatenation), inserting the string names of each resource to be preloaded by the client.

For example,

# Door.py

import BigWorld

class Door( BigWorld.Entity ):

    def __init__( self ):

        ...

def preload( list ):

    list.append( "doors/models/generic_door.model" )

    list.append( "doors/maps/door_highlight.tga" )

    ...

The types of resources that can be preloaded are:

Fonts

Textures

Shaders

Models

When an entity is about to appear in the world on the client, the engine will execute a callback on the entity script called prerequisites. This allows entity scripts to return a list of resources that must be loaded before the entity may enter the world. These resources are loaded by the loading thread, so as to not interrupt the rendering pipeline.

It is recommended practice for an entity to expose its required resources as pre-requisites, and load them in the method onEnterWorld. Unlike using preloads, prerequisites do not leak a reference, so when the entity leaves the world, it will free its resources.

The Entity Manager calls Entity::checkPrerequisites before allowing an entity to enter the world. This method checks whether the pre-requisites for this entity entering the world are satisfied. If they are not, then it starts the process of satisfying them (if not yet started).

Note that when the prerequisites method is called on the entity, its script has already been initialised and its properties have been set up. The entity thus may specialise its pre-requisites list based on the specific instance of that entity. For example:

class Door( BigWorld.Entity ):

    ...

    def prerequisites( self ):

        return [ DoorResources[ self.modelType ].modelName ]

    ...

User data objects are a way of embedding user defined data in Chunk files. Each user data object type is implemented as a collection of Python scripts, and an XML-based definition file that ties the scripts together.

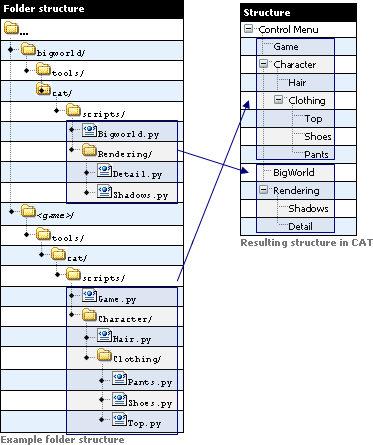

These scripts are located in the resource tree under the folder scripts.

User data objects differ from entities in that they are immutable (i.e. their properties don't change), and that they are not propagated to other cells or clients. This makes them a lot lighter than entities.

A key feature of user data objects is their linkability. Entities are able to link to user data objects, and user data objects are able to link to other user data objects. This is achieved by including a UDO_REF property in the definition file for the user data object or entity that wishes to link to another user data object.

For more information about linking, please refer to Server Programming Guide's section User Data Object Linking With UDO_REF Properties.

For details on the definition of a user data object, which is shared between the server and the client, see the document Server Programming Guide's section Directory Structure for User Data Object Scripting → The User Data Object Definition File.

Each user data object is a Python script object (PyObject). Depending on the user data object type, it can exist in different parts of BigWorld, as listed below:

Client only

Client only user data objects are created by using the CLIENT domain in the Domain tag inside their definition file. Client-only user data objects should not have cell or base scripts.

For an example of a client-only user data object, please refer to the CameraNode user data object, implemented in the following files:

<res>/scripts/client/CameraNode.py

<res>/scripts/user_data_object_defs/CameraNode.def

Server only

Server only user data objects are instantiated on the server only, and will be instantiated in the cell if the Domain tag is CELL, or in the base if the Domain tag is set to BASE.

For an example of a server user data object, please refer to the PatrolNode user data object, implemented in the following files:

<res>/scripts/cell/PatrolNode.py

<res>/scripts/user_data_object_defs/PatrolNode.def

The client can access all client-only user data objects using the command:

>>> BigWorld.userDataObjects

<WeakValueDictionary at 3075900908>

This will return a Python dictionary, using the user data object’s unique identifier as the key, and its PyObject representation as its value. The attributes and script methods of the user data object can be accessed using the standard dot syntax:

>>> patrolNode.patrolLinks

[UserDataObject at 2358353012, UserDataObject at 2358383771]

The facilities provided to scripts are of extreme importance, as they determine the generality and extensibility of the client. To a script programmer this environment is the client; just as to an ordinary user, the windowing system is the computer.

The scripting environment offers a great temptation to try to write the whole of a program in it. This can quickly make for slow and incomprehensible programs (especially if the same programming discipline is not applied to the scripting language as is to C++). Therefore, we recommend that a functionality should only be written in script when it does not need to be called at every frame. Furthermore, where it is global, it should be implemented in the personality script. So, for example, a global chat console would be implemented in the personality script, whilst a targeting system, which needs to check the collision scene at every frame, is best implemented in C++.

Importantly, the extensive integration of Python throughout BigWorld Technology allows for both rapid development of game code, and enormous flexibility.

Note

Garbage collection is disabled in BigWorld's Python integration, because garbage collection is an expensive operation that can occur at any time, blocking the main thread and causing, for example, frame rate spikes.
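One practical consequence in CPython is that, with the cyclic collector off, objects caught in a reference cycle are not reclaimed automatically, while objects without cycles are still freed by reference counting. The sketch below illustrates this using only the standard gc and weakref modules. How the client disables collection is not stated here, so the call to gc.disable() is an assumption used to reproduce the behaviour, and the Node class and survives_deletion helper are hypothetical.

```python
import gc
import weakref


class Node(object):
    """Plain object used to build a parent/child relationship."""
    pass


def survives_deletion(make_cycle):
    """Return True if a Node outlives its last external reference."""
    parent = Node()
    child = Node()
    # A strong back-reference forms a cycle; a weak proxy does not.
    child.parent = parent if make_cycle else weakref.proxy(parent)
    parent.child = child
    probe = weakref.ref(parent)   # lets us observe the object's lifetime
    del parent, child
    return probe() is not None


was_enabled = gc.isenabled()
gc.disable()   # assumption: mimics the client's disabled cyclic collector
try:
    leaked_with_cycle = survives_deletion(make_cycle=True)
    leaked_with_weak_link = survives_deletion(make_cycle=False)
finally:
    gc.collect()   # reclaim the cycle created above
    if was_enabled:
        gc.enable()
```

Under these assumptions, scripts would avoid cycles (for example by using weak back-references) or break them explicitly when an object is no longer needed.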

This section describes the contents and services of the (general) C++ entity, from the point of view of a script that uses these facilities. The functional components of an entity, described in the following sections, are:

Entity Skeleton

Python Script Object

Model Management

Filters

Action Queue

Action Matcher

Trackers (IK)

Timers and Traps

The Entity class (entity.cpp) is the C++ container that brings together whatever components are in use for a particular entity. It is derived from a Python object, intercepts certain accesses, and passes those it does not understand on to the user script. This allows scripts to call C++ functions on themselves (and other entities) transparently, using the 'self' reference. The same technique of integration has been used in the cell and base components of the server.
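The forwarding idea can be illustrated in pure Python. This is only a sketch of the delegation pattern, not the engine's C++ implementation: EngineEntity, GuardScript, and greet are hypothetical names, and only the forwarding of unknown attributes to the script is shown.

```python
class EngineEntity(object):
    """Hypothetical stand-in for the C++ Entity container."""

    def __init__(self, entity_id, script):
        self.id = entity_id
        self.position = (0.0, 0.0, 0.0)
        self._script = script   # instance of the user-supplied class

    def __getattr__(self, name):
        # Called only when normal lookup fails, i.e. for names the
        # container does not handle itself: forward them to the script.
        return getattr(self._script, name)


class GuardScript(object):
    """Hypothetical user script class."""

    def greet(self):
        return "halt"


entity = EngineEntity(42, GuardScript())
```

Here entity.id is answered by the container itself, while entity.greet() falls through to the script object.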

This class handles any housekeeping or glue required by the component classes — it is the public face of an entity as far as other C++ modules are concerned.

The data members of this class include id, type, and position.

This is the instance of the user-supplied script class. The type of the entity selects the class. It stores type-specific data defined in the XML description of the entity type, as well as any other internally used data that the script wishes to store.

When field data is sent from the server, this class has its fields automatically updated (and it is notified of the change). When messages are received from the server (or another script on the client), the message handlers are called automatically. The entity class performs this automation — it appears to be automatic from the script's point of view.
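The update-then-notify behaviour can be sketched in plain Python as follows. The Avatar class, the apply_field_update helper, and the set_<name> hook naming used here are assumptions made for illustration; refer to the API documentation for the notification methods the engine actually calls.

```python
class Avatar(object):
    """Hypothetical entity script with one server-updated field."""

    def __init__(self):
        self.health = 100
        self.log = []

    def set_health(self, old_value):
        # Notification hook: the field already holds the new value.
        self.log.append((old_value, self.health))


def apply_field_update(script, name, value):
    """Store a new field value, then notify the script if it has a hook."""
    old_value = getattr(script, name, None)
    setattr(script, name, value)
    hook = getattr(script, "set_" + name, None)
    if callable(hook):
        hook(old_value)


avatar = Avatar()
apply_field_update(avatar, "health", 75)
```

From the script's point of view, the field simply changes and the hook is invoked; the script never calls apply_field_update itself.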

A model is BigWorld's term for a mesh, plus the animations and actions used on it.

The model management component allows an entity to manage the models that are drawn and animated at its position. Models can be attached to each other at well-defined attachment points (hard points), or they can exist independently. A model is not automatically added to the scene when it is loaded — it must be explicitly put in it.

An entity may use any number of independent (disconnected) models, but most will use zero or one. Those that use more require special filters to behave sensibly. For details, see Filters.

The best way to understand models is to be acquainted with their Python interface, which is described in the Client Python API's entry Main → Client → BigWorld → Classes → PyModel. For more details, see Models.

Filters take time-stamped position updates and interpolate them to produce the position for an entity at an arbitrary time.

BigWorld provides only the Filter base class. The game developer would derive game-specific filters from this. Each entity can then select one type of filter for itself from the variations available. It can dynamically change its filter if it so desires.

Whenever a movement update comes from the server, it is handed over to the selected filter, along with the time that the (existing) game time reconstruction logic calculated for that event.

The filter can also be provided with gaps in time and transform, i.e., 'at game-time x there was a forward movement of y metres and a rotation of z radians lasting t seconds'. The filter (if it is smart enough) can then incorporate this into its interpolation.

The filter can also execute script callbacks at a given time in the stream.

Filters are fully accessible from Python.
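To make the interpolation idea concrete, the following stand-alone sketch stores time-stamped positions and linearly interpolates between the two updates surrounding the requested time. It does not use the BigWorld Filter base class: LinearFilter and its input and output method names are hypothetical, and linear interpolation is only one possible strategy.

```python
import bisect


class LinearFilter(object):
    """Hypothetical filter that linearly interpolates between updates."""

    def __init__(self):
        self._times = []       # sorted game times of received updates
        self._positions = []   # position tuples, parallel to _times

    def input(self, time, position):
        """Record a time-stamped position update, keeping time order."""
        index = bisect.bisect(self._times, time)
        self._times.insert(index, time)
        self._positions.insert(index, tuple(position))

    def output(self, time):
        """Return the interpolated position at an arbitrary time."""
        if not self._times:
            raise ValueError("no updates received yet")
        index = bisect.bisect(self._times, time)
        if index == 0:
            return self._positions[0]      # before the first update
        if index == len(self._times):
            return self._positions[-1]     # at or after the last update
        t0, t1 = self._times[index - 1], self._times[index]
        p0, p1 = self._positions[index - 1], self._positions[index]
        blend = (time - t0) / float(t1 - t0)
        return tuple(a + (b - a) * blend for a, b in zip(p0, p1))


avatar_filter = LinearFilter()
avatar_filter.input(10.0, (0, 0, 0))
avatar_filter.input(11.0, (4, 0, 2))
halfway = avatar_filter.output(10.5)
```

Requests outside the recorded range are clamped to the nearest update in this sketch; a game-specific filter might extrapolate instead.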

BigWorld provides access to some navigation methods by the client scripts. BigWorld.navigatePathPoints() will return a list of points along the path between the given source and destination points, and BigWorld.findRandomNeighbourPoint() and the related BigWorld.findRandomNeighbourPointWithRange() will return a random point in a connected navmesh, which will be navigable from the given point.

These methods are provided for the client in order to allow some processing to be moved away from the server, and to allow movement to be more responsive, removing the need to wait for the server to provide a path.

For more details about navigation in BigWorld, refer to the Server Programming Guide's section Navigation System.

If the client-side navigation methods are not used, loading the navmeshes would cause unnecessary additional memory usage (usually 10-50 MB). For this reason, navigation meshes will not be loaded by default. Each space that will use navigation must be configured to do so. Add the following section to the space.settings file of any space that will use client-side navigation:

<clientNavigation>
    <enable> true </enable>
</clientNavigation>

This will ensure that the client will load any navigation meshes stored with the chunk data.
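As a rough illustration, a build or validation script could check whether a space has this option set. The sketch below parses settings text with the standard xml.etree.ElementTree module. The enclosing <root> element and the client_navigation_enabled helper are assumptions made so that the example is well-formed and runnable; only the clientNavigation section itself comes from the text.

```python
import xml.etree.ElementTree as ET

# Assumption: the enclosing <root> element and this sample content are
# illustrative; only the <clientNavigation> section comes from the text.
SAMPLE_SETTINGS = """
<root>
    <clientNavigation>
        <enable> true </enable>
    </clientNavigation>
</root>
"""


def client_navigation_enabled(settings_xml):
    """Return True if the settings text enables client-side navigation."""
    root = ET.fromstring(settings_xml)
    value = root.findtext("clientNavigation/enable", default="false")
    return value.strip().lower() == "true"


enabled = client_navigation_enabled(SAMPLE_SETTINGS)
```

A missing section is treated as disabled here, matching the default described above.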

For more details about this space.settings option, see the File Grammar Guide's section space.settings.

Note

If client-side navigation is not enabled for a space, then ResPacker will strip the navigation meshes from the cdata files for the client package. This is to keep the chunk resource files as small as possible for distribution.

BigWorld.navigatePathPoints() will take source and destination points, and return a path of points between them, such that moving to each in turn will result in an entity successfully navigating to the destination.

The method has the following syntax:

navigatePathPoints( src, dst, maxSearchDistance, girth )

The arguments are as follows:

src

Vector3 containing the source point in the current space.

dst

Vector3 containing the destination point in the current space.

maxSearchDistance

float containing the maximum distance that will be searched for a path, from the source point. This defaults to 500.

girth

float containing the navigation girth grid to use. This defaults to 0.5 if not supplied. The girth is the minimum width of the path, and is used to ensure that large entities cannot navigate through areas that are too narrow for them. This value must correspond to one of the girth entries in the girths file, which is located at bigworld/res/helpers/girths.xml by default.

BigWorld.navigatePathPoints() returns a list of Vector3 points containing the path. If a path cannot be found, it will raise an exception.

For example, the following call will return a list of points along the path between (100,10,200) and (150,20,50) if it exists. The path must be at least 4m wide at all points, and must be less than 300m in total:

BigWorld.navigatePathPoints( (100, 10, 200), (150, 20, 50), 300, 4 )

The path will be returned as a list:

[(100,10,200), (121,3,148), (133,9,87), (144,8,61), (150,20,50)]
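Since the call raises an exception when no path exists, calling code would normally guard it. The sketch below shows one way to do so, together with a helper that measures a returned path. The function to call is passed in as a parameter so that the snippet runs outside the client; in the client it would be BigWorld.navigatePathPoints. The helper names try_navigate and path_length are hypothetical, and because the exception type is not stated above, a generic Exception is caught.

```python
import math


def path_length(points):
    """Return the summed straight-line length of consecutive path points."""
    total = 0.0
    for (x0, y0, z0), (x1, y1, z1) in zip(points, points[1:]):
        total += math.sqrt((x1 - x0) ** 2 + (y1 - y0) ** 2 + (z1 - z0) ** 2)
    return total


def try_navigate(navigate, src, dst, max_search_distance=500, girth=0.5):
    """Call a navigatePathPoints-style function; return [] if it raises.

    navigate is passed in so the sketch runs outside the client; in the
    client it would be BigWorld.navigatePathPoints.
    """
    try:
        return list(navigate(src, dst, max_search_distance, girth))
    except Exception:
        # The text only says "an exception"; the exact type is not assumed.
        return []


# Path copied from the example above.
example_path = [(100, 10, 200), (121, 3, 148), (133, 9, 87),
                (144, 8, 61), (150, 20, 50)]
example_length = path_length(example_path)
```

Returning an empty list on failure lets the caller fall back to another behaviour, such as waiting for a path from the server.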

BigWorld.findRandomNeighbourPoint() will take a source point, and return a random point in a connected navmesh. The resulting point is guaranteed to be connected to the source.

The method has the following syntax:

findRandomNeighbourPoint( position, radius, girth )

The arguments are as follows:

position

Vector3 containing the source point in the current space.

radius

float containing the maximum distance that will be searched for a path, from the source point.

girth

float containing the navigation girth grid to use. This defaults to 0.5 if not supplied. The girth is the minimum width of the path, and is used to ensure that large entities cannot navigate through areas that are too narrow for them. This value must correspond to one of the girth entries in the girths file, which is located at bigworld/res/helpers/girths.xml by default.

BigWorld.findRandomNeighbourPoint() returns a Vector3 containing the random point. If an appropriate point cannot be found, it will raise an exception.

For example, the following call will return a random point within 300m of the point (100,10,200). The path to this point must be at least 0.5m wide at all points.

BigWorld.findRandomNeighbourPoint( (100, 10, 200), 300, 0.5 )

BigWorld.findRandomNeighbourPointWithRange() performs the same function as BigWorld.findRandomNeighbourPoint(), but takes an additional argument specifying a minimum radius. This allows the caller to specify a range for the distance to the random point.

The method has the following syntax:

findRandomNeighbourPointWithRange( position, minRadius, maxRadius, girth )

The arguments are as follows:

position

Vector3 containing the source point in the current space.

minRadius

float containing the minimum distance that will be searched for a path, from the source point.

maxRadius

float containing the maximum distance that will be searched for a path, from the source point.

girth

float containing the navigation girth grid to use. This defaults to 0.5 if not supplied. The girth is the minimum width of the path, and is used to ensure that large entities cannot navigate through areas that are too narrow for them. This value must correspond to one of the girth entries in the girths file, which is located at bigworld/res/helpers/girths.xml by default.

BigWorld.findRandomNeighbourPointWithRange() returns a Vector3 containing the random point. If an appropriate point cannot be found, it will raise an exception.

For example, the following call will return a random point further than 200m, but within 400m of the point (150, 20, 50). The path to this point must be at least 4m wide at all points.

BigWorld.findRandomNeighbourPointWithRange( (150, 20, 50), 200, 400, 4 )
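Because the random-point methods raise an exception when no suitable point is found, a script might wrap the call and retry a small number of times. The following is only a sketch of that idea: pick_wander_point and its attempts parameter are hypothetical, the function to call is passed in so that the snippet runs outside the client (in the client it would be BigWorld.findRandomNeighbourPointWithRange), and a generic Exception is caught because the exception type is not stated above.

```python
def pick_wander_point(find_point, position, min_radius, max_radius,
                      girth=0.5, attempts=3):
    """Try a findRandomNeighbourPointWithRange-style call a few times.

    find_point is passed in so the sketch runs outside the client; in the
    client it would be BigWorld.findRandomNeighbourPointWithRange.
    Returns the point, or None if every attempt raised an exception.
    """
    for _ in range(attempts):
        try:
            return find_point(position, min_radius, max_radius, girth)
        except Exception:
            # The exact exception type is not stated in the text.
            continue
    return None
```

Returning None rather than propagating the exception leaves it to the caller to decide what an entity should do when it has nowhere to go.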

The action queue is the structure within the BigWorld Technology framework that controls the queue of actions in effect on a model (actions are wrapped by ActionQueuer objects that are contained by the action queue).

The ActionQueue deals with the combining of layers and also applying the appropriate blend in and blend out times.

The ActionQueue also deals with any scripted callback functions that are linked to the playing of an action, for example, calling sound-playing callbacks at particular frames in an action.

An action is described in XML as illustrated in the example .model file below (model files are located in any of the various sub-folders under the resource tree <res>, such as, for example, <res>/environments, <res>/flora, <res>/sets/vehicles, etc.):