
The Fault Tolerance system in BigWorld Technology is designed to cope transparently with the loss of a machine due to a physical, electrical, or logical fault.

The game systems in general should not be affected, and the game experience will continue normally for most clients, with possibly a brief interruption for clients closest to the faulty machine.

The loss of any single machine (running a single process) is always handled. The loss of multiple machines in a short time frame may not always be adequately handled. In such extreme cases — for example, a complete power outage affecting all machines — the Disaster Recovery system will be invoked. For more details, see Disaster Recovery.

Note that, in spite of handling failures, the Fault Tolerance system should not be relied upon to cover up a software bug that causes a component to crash. All such bugs should be found and fixed in the source code. If the bug is believed to be in BigWorld Technology code (and not in a customer's extension), then contact BigWorld Support with details of the problem. For more details, see First Aid After a Crash.

CellApp fault tolerance works by backing up the cell entities to their base entities.

As long as a backup period is specified for the cell entities, the fault tolerance for the CellApp processes is automatic. An operator should ensure that there is enough spare capacity in available CellApps to take up the load of a lost process.

For details on how to specify the CellApp backup period, see CellApp Configuration Options.

Note

Cell entities without a base entity are not backed up, and therefore will not be restored if their process is lost.

Note

Since cell entities back up to base entities, running CellApp and BaseApp processes on the same machine should be avoided. If the entire machine is lost, this level of fault tolerance will not work.
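The placement advice above can be checked mechanically. The following is a minimal sketch, assuming a hypothetical `layout` mapping from hostname to the process types running on that host. The mapping, the host names, and the function name `colocated_hosts` are illustrative and not part of BigWorld.

```python
# Hypothetical cluster layout: hostname -> process types running there.
# The host names and this data structure are assumptions for illustration.
layout = {
    "host-a": ["cellapp", "cellapp"],
    "host-b": ["baseapp"],
    "host-c": ["cellapp", "baseapp"],
}


def colocated_hosts(layout):
    """Return the hosts that run both a CellApp and a BaseApp, sorted."""
    return sorted(
        host
        for host, procs in layout.items()
        if "cellapp" in procs and "baseapp" in procs
    )


# Any host listed here would lose cell entities and their backups together.
print(colocated_hosts(layout))
```

Here only `host-c` is reported, since it is the one machine whose loss would take out both the cell entities and the base entities holding their backups.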

For more details on how CellApp fault tolerance is implemented at the code level, see the Fault Tolerance chapter of the Server Programming Guide.

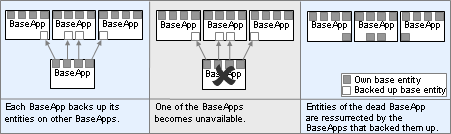

BaseApp fault tolerance works by backing up each base entity to another BaseApp, chosen via a hashing scheme.

For more details, see the section Server Components → BaseApp → BaseApp of the Server Overview. For details on how BaseApp fault tolerance is implemented at the code level, see the Fault Tolerance chapter of the Server Programming Guide.

Each BaseApp is assigned a set of other BaseApps and a hash function from an entity's ID to one of these backups. Over a period of time, all entities are backed up. This is then repeated. If a BaseApp dies, then the entities are restored on to the appropriate backup.

Base entities that were previously on the same BaseApp may end up on different BaseApps after a restore, which places limits on scripting.

In addition, any attached clients will be disconnected on an unexpected BaseApp failure, requiring a re-login. However, if BaseApps are retired, attached clients will be migrated to another running BaseApp.

Distributed BaseApp Backup — Dead BaseApp's entities can be restored to Backup BaseApp
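The hashing scheme described above can be sketched as follows. This is an illustration only: the text does not specify the actual hash function, so plain modulo stands in for it, and the BaseApp names and entity IDs are hypothetical.

```python
def backup_for(entity_id, backups):
    """Pick the backup BaseApp for an entity by hashing its ID.

    Plain modulo stands in for the real hash function, which the text
    does not specify.
    """
    return backups[entity_id % len(backups)]


def restore(entity_ids, backups):
    """Group a dead BaseApp's entities by the backup that restores them."""
    restored = {}
    for entity_id in entity_ids:
        restored.setdefault(backup_for(entity_id, backups), []).append(entity_id)
    return restored


# Hypothetical names: entities 1001-1006 lived on a BaseApp that died;
# it had been assigned three backup BaseApps.
backups = ["baseapp02", "baseapp03", "baseapp04"]
print(restore(range(1001, 1007), backups))
```

The six entities shared one BaseApp before the failure, but are restored across three different BaseApps. This is why scripts should not assume that entities which were once co-located remain so.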

Each Service will typically be split among multiple ServiceApps. Since Services are stateless, no data loss will occur from a ServiceApp failure. For this reason it is not necessary for Services to be backed up.

When a BaseApp script makes use of a Service, it gets a mailbox from the BigWorld.services map. This returns a random mailbox of one of the Service's fragments. Losing a single fragment will only cause the remaining fragments to be chosen more frequently.

In some cases, a script may hold on to a Service Fragment's mailbox. If the ServiceApp to which this mailbox refers happens to crash, it is automatically redirected to an alternate ServiceApp fragment mailbox. The BWPersonality.onServiceAppDeath callback is also called.

A Service's users will be evenly distributed among the ServiceApps currently offering it.
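The behaviour described for Service fragments can be modelled with a small toy simulation. This sketch does not use the BigWorld API: the class `ServiceDirectory`, its methods, and the ServiceApp names are all hypothetical, and it assumes that a redirected mailbox is pointed at a randomly chosen surviving fragment, which the text does not state.

```python
import random


class ServiceDirectory:
    """Toy model of one Service split into fragments across ServiceApps."""

    def __init__(self, fragments):
        self.fragments = list(fragments)  # names of live ServiceApps
        self.held = {}                    # holder -> fragment it kept

    def pick(self, rng=random):
        """Return a random live fragment, as a fresh lookup would."""
        return rng.choice(self.fragments)

    def service_app_died(self, dead, rng=random):
        """Drop a dead fragment and redirect every mailbox that held it.

        Returns the holders that were redirected; a real server would
        also invoke a death callback at this point.
        """
        self.fragments.remove(dead)
        redirected = []
        for holder, fragment in self.held.items():
            if fragment == dead:
                self.held[holder] = self.pick(rng)
                redirected.append(holder)
        return redirected


directory = ServiceDirectory(["serviceapp01", "serviceapp02", "serviceapp03"])
directory.held["script-a"] = "serviceapp02"  # a script holding one mailbox
print(directory.service_app_died("serviceapp02"))
print(directory.held["script-a"] in directory.fragments)
```

After the simulated crash, fresh lookups are spread over the two remaining fragments, and the held mailbox points at a live fragment again.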

Fault tolerance for BaseAppMgr, CellAppMgr, DBMgr, and LoginApp is provided by the Reviver, by starting a new instance of the process to replace the unavailable one.

Although it is possible for one Reviver to watch all processes, it is recommended to run multiple Reviver instances on different machines, as a Reviver normally stops after reviving a process.

For more details on Reviver, see the Server Overview's chapters Design Introduction and Server Components.

When the Reviver starts, it queries the local BWMachined process, and will only support the components that have an entry in a special category called Components in the machine's configuration file /etc/bwmachined.conf.

An example /etc/bwmachined.conf specifying that the Reviver should support all singleton server components would contain a section as below:

    [Components]
    baseApp
    baseAppMgr
    cellApp
    cellAppMgr
    dbMgr
    loginApp

Note

If the [Components] category does not contain any entries, then Reviver will support all singleton server components.

BaseApp and CellApp will not be restarted by Reviver — the [Components] entries are used by WebConsole and control_cluster.py to determine which processes should be started by BWMachined on that host.
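The rules above for the Components category can be sketched in a few lines. This is a minimal illustration that assumes the category can be read as an INI-style section with one value-less name per line; a real /etc/bwmachined.conf may contain other syntax that Python's `configparser` does not accept. The function name `reviver_supported` is hypothetical, and treating a missing category like an empty one is an assumption.

```python
import configparser

# Singleton processes that the text says Reviver can restart.
SINGLETONS = ("baseAppMgr", "cellAppMgr", "dbMgr", "loginApp")


def reviver_supported(conf_text):
    """Return the set of singletons a Reviver on this host would support."""
    parser = configparser.ConfigParser(allow_no_value=True)
    parser.optionxform = str  # keep names such as "dbMgr" case-sensitive
    parser.read_string(conf_text)
    entries = set()
    if parser.has_section("Components"):
        entries = set(parser["Components"])
    if not entries:
        # Empty category: Reviver supports every singleton component.
        # (A missing category is treated the same way; an assumption.)
        return set(SINGLETONS)
    # baseApp and cellApp entries are skipped: Reviver never restarts them.
    return entries & set(SINGLETONS)


example = "[Components]\nbaseApp\ncellApp\ndbMgr\nloginApp\n"
print(sorted(reviver_supported(example)))
```

In the example, `baseApp` and `cellApp` are listed for the benefit of the cluster tools but do not affect the Reviver, which would support only DBMgr and LoginApp on that host.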

Be aware that the configuration file is only read when BWMachined starts. If you make any changes to the configuration file, you will need to restart BWMachined before the changes are recognised. To restart BWMachined, run the following command as the root user:

# /etc/init.d/bwmachined2 restart

Because a Reviver process restarts a dead server process as a one-off task and then shuts down, a misconfigured Reviver layout can leave your cluster exposed to a process outage that none of the available Revivers handles.

As a worst-case scenario, consider a server cluster that hosts the BaseAppMgr and CellAppMgr processes on a machine with the hostname gameworld, and a single Reviver process on a backup machine with the hostname gamereviver. If gamereviver has been configured to revive both the BaseAppMgr and CellAppMgr processes and gameworld suffers a power failure, only one of the two processes will be revived on gamereviver, because the Reviver stops after its first revival.

For this reason, BigWorld recommends that you run enough Reviver instances so that each Reviver is responsible for one singleton process. This approach should result in a fault tolerance layout that avoids gaps in your cluster revival plan.
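The gap in the worst-case scenario can be reproduced with a small simulation. This sketch assumes a simplified model in which each failed singleton is handed to the first idle Reviver that watches it; how real Revivers decide among themselves is not described here. The function `simulate` and the host name `gamereviver2` are hypothetical.

```python
def simulate(failed, revivers):
    """Assign each failed singleton to a Reviver that has not yet fired.

    `revivers` maps a Reviver host name to the set of components it
    watches. Each Reviver revives at most one process and then stops.
    """
    available = dict(revivers)
    revived, unhandled = {}, []
    for component in failed:
        name = next(
            (r for r, watched in available.items() if component in watched),
            None,
        )
        if name is None:
            unhandled.append(component)
        else:
            revived[component] = name
            del available[name]  # this Reviver has done its one job
    return revived, unhandled


failed = ["baseAppMgr", "cellAppMgr"]  # both lost together with gameworld

single = {"gamereviver": {"baseAppMgr", "cellAppMgr"}}
print(simulate(failed, single))

# Hypothetical second host so that each singleton has its own Reviver.
dedicated = {"gamereviver": {"baseAppMgr"}, "gamereviver2": {"cellAppMgr"}}
print(simulate(failed, dedicated))
```

With a single Reviver, CellAppMgr is left unhandled. With one Reviver per singleton, both processes are revived.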

The supported components can also be specified on the command line (although we recommend using /etc/bwmachined.conf instead), as below:

reviver [--add|--del {baseAppMgr|cellAppMgr|dbMgr|loginApp} ]

If reviver is invoked with no options, then it will try to monitor all singleton processes specified in the Components category of /etc/bwmachined.conf.

The options for invoking reviver are described in the list below:

- --add { baseAppMgr | cellAppMgr | dbMgr | loginApp }

  Starts the Reviver, trying to monitor only the components specified in this option. That means that the components list in /etc/bwmachined.conf will be ignored.

- --del { baseAppMgr | cellAppMgr | dbMgr | loginApp }

  Starts the Reviver, trying to monitor all components specified in the list in /etc/bwmachined.conf, except the ones specified in this option.

- To start Reviver trying to monitor all processes specified in Components in /etc/bwmachined.conf:

  reviver

- To start Reviver trying to monitor only DBMgr and LoginApp:

  reviver --add dbMgr --add loginApp

- To start Reviver trying to monitor all processes specified in Components in /etc/bwmachined.conf, except DBMgr and LoginApp:

  reviver --del dbMgr --del loginApp
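The option semantics above can be summarised in a short sketch. It is not the Reviver's own argument handling: the function `monitored` is hypothetical, rejecting a mix of --add and --del is an assumption (the text does not say what happens when both are given), and an empty Components list is taken to mean all singletons, as described for the configuration file.

```python
import argparse

SINGLETONS = ["baseAppMgr", "cellAppMgr", "dbMgr", "loginApp"]


def monitored(argv, conf_components):
    """Return the singletons a Reviver would try to monitor.

    `argv` is the option list after the program name; `conf_components`
    is the Components list read from /etc/bwmachined.conf.
    """
    parser = argparse.ArgumentParser(prog="reviver")
    group = parser.add_mutually_exclusive_group()  # assumption: no mixing
    group.add_argument("--add", action="append", choices=SINGLETONS)
    group.add_argument("--del", dest="remove", action="append",
                       choices=SINGLETONS)
    args = parser.parse_args(argv)

    if args.add:
        # --add: monitor only the named components; the file is ignored.
        return [c for c in SINGLETONS if c in args.add]
    # Only singleton entries matter; an empty list means all singletons.
    from_conf = [c for c in SINGLETONS if c in conf_components]
    if not conf_components:
        from_conf = list(SINGLETONS)
    if args.remove:
        # --del: monitor the file's list minus the named components.
        return [c for c in from_conf if c not in args.remove]
    return from_conf


conf = ["baseApp", "baseAppMgr", "cellApp", "cellAppMgr", "dbMgr", "loginApp"]
print(monitored([], conf))
print(monitored(["--add", "dbMgr", "--add", "loginApp"], conf))
print(monitored(["--del", "dbMgr", "--del", "loginApp"], conf))
```

The three calls mirror the three example invocations: everything in the file, only DBMgr and LoginApp, and everything in the file except DBMgr and LoginApp.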